Campbell Brown has spent her career chasing accurate information, first as a television journalist and later as Facebook’s first and only dedicated news chief. Now she is confronting a new frontier in the battle for truth: artificial intelligence. Watching AI evolve rapidly and reshape how people consume information, Brown sees history threatening to repeat itself, echoing the misinformation problems that plagued social media platforms. This time, however, she is not waiting for others to address the looming crisis. She is building a solution herself.

Her latest venture, New York-based Forum AI, founded 17 months ago, evaluates how foundation models perform on what she calls "high-stakes topics": geopolitics, mental health, finance, and hiring. These subjects are complex and nuanced, and they rarely have clear-cut yes-or-no answers. Brown described Forum AI’s mission at a recent TechCrunch StrictlyVC evening in San Francisco, in conversation with Tim Fernholz, emphasizing the company’s focus on making sure AI models give reliable, context-rich information in areas where accuracy matters most.

A Career Defined by the Pursuit of Truth

Brown’s path into AI evaluation is rooted in her professional history. Before moving into tech, she was a respected television journalist, anchoring shows at NBC News and CNN. Her work there often centered on investigative reporting and delivering factual news, and it earned her a reputation for rigor and journalistic integrity. That experience gave her a deep understanding of the role accurate information plays in a functioning society and democracy.

Her move to Facebook (now Meta) in 2017 placed her at the intersection of technology and media. As the company’s Head of News Partnerships, Brown was tasked with navigating the increasingly complex landscape of digital news consumption, combating the spread of misinformation, and fostering a healthier information ecosystem on the platform. The role gave her a close view of the systemic problems that come with distributing information at scale, the dangers of algorithms optimized primarily for engagement, and how hard it is to correct widespread inaccuracies once they take root. She helped build a fact-checking program, but she candidly admits that many initiatives "failed" and that optimizing for engagement often proved "lousy for society," leaving many users less informed rather than more. That period of intense learning, and at times disillusionment, laid the groundwork for her current mission.

The Catalyst: ChatGPT and the Dawn of a New Information Era

The inspiration for Forum AI emerged from a specific, almost existential moment for Brown. "I was at Meta when ChatGPT was first released publicly," she recounted. "And I remember really shortly after realizing this is going to be the funnel through which all information flows. And it’s not very good." The immediate implications of this realization, particularly for her own children, spurred her into action. "My kids are going to be really dumb if we don’t figure out how to fix this," she recalled thinking, highlighting the personal urgency driving her professional endeavor.

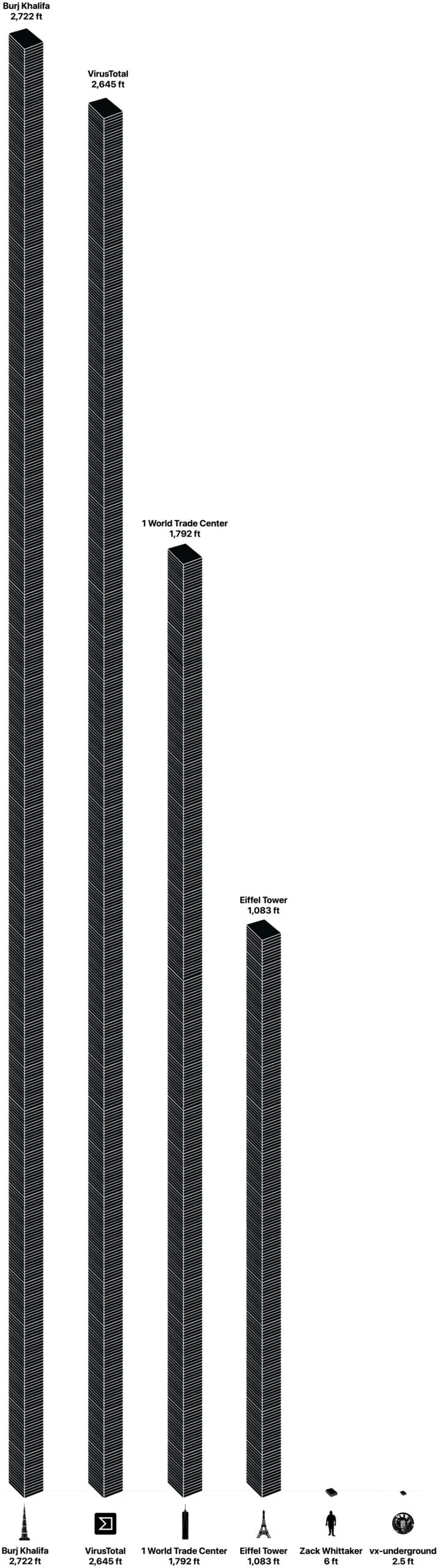

The public release of ChatGPT in late 2022 by OpenAI marked a significant inflection point in the technological landscape, thrusting generative AI into mainstream consciousness. While lauded for its remarkable capabilities in generating human-like text, translating languages, and writing various kinds of creative content, it also quickly became apparent that these models were prone to "hallucinations"—generating factually incorrect or nonsensical information with high confidence. Early users reported instances of AI chatbots confidently asserting false historical facts, providing erroneous medical advice, or fabricating legal precedents, underscoring a fundamental challenge in their design and training. This inherent fallibility, particularly in nuanced and critical domains, became the core problem Forum AI was designed to address.

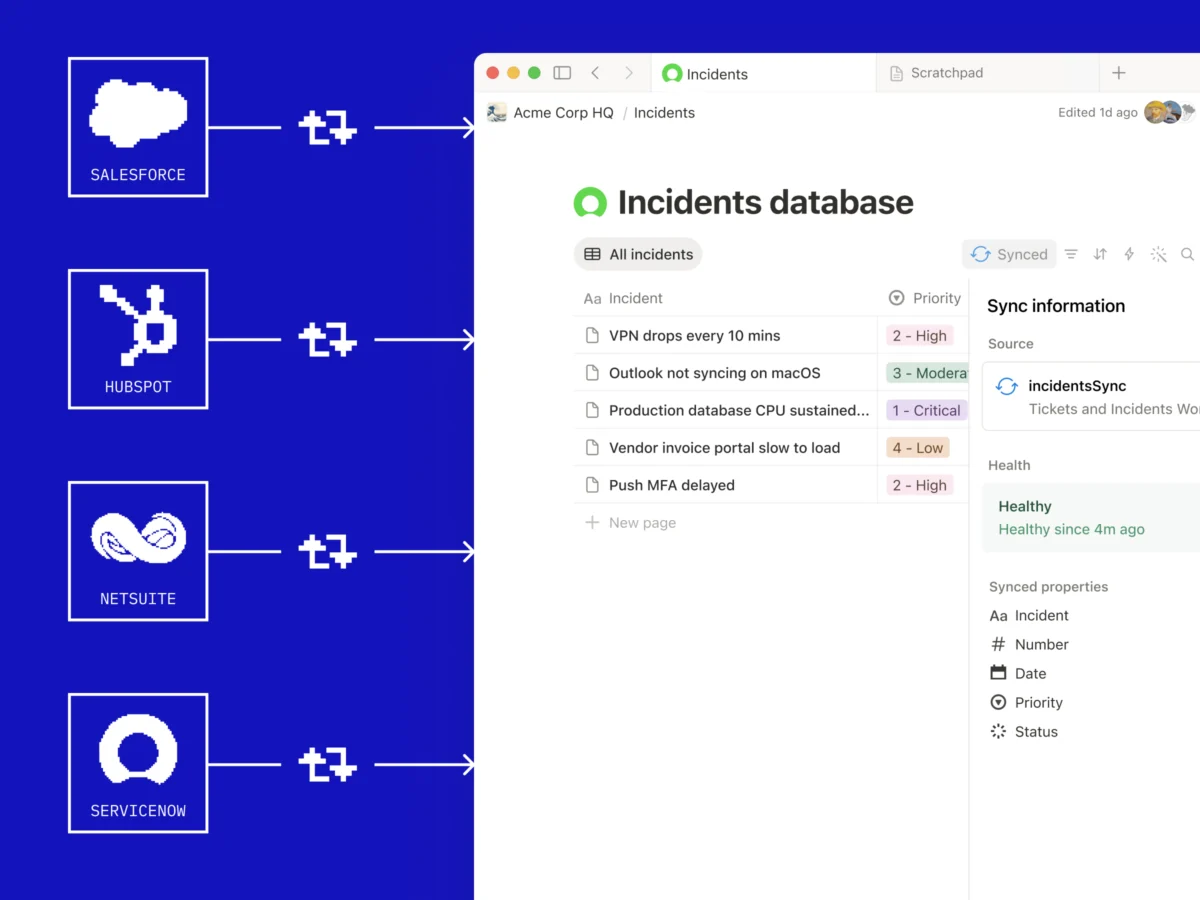

Forum AI’s Innovative Approach: Human Expertise Meets AI Evaluation

Forum AI’s methodology is a hybrid that pairs top human experts with scalable AI evaluation. The process begins by identifying leading experts in each high-stakes domain. These experts then design benchmarks that go beyond simple factual recall, testing instead whether an AI model can handle nuance, context, and multiple perspectives on complex topics. For its geopolitics work, Brown has assembled a high-profile roster: historian Niall Ferguson; journalist and author Fareed Zakaria; former Secretary of State Tony Blinken; former House Speaker Kevin McCarthy; and Anne Neuberger, who led cybersecurity in the Biden White House. The group spans a range of political viewpoints and professional experience, which is meant to make the assessment framework more robust and multidimensional.

Once the expert-designed benchmarks are in place, Forum AI trains specialized AI judges to evaluate models at scale. The goal is roughly 90% agreement between the AI judges and the human experts, a threshold Brown says Forum AI has reached. Pairing expert-built benchmarks with AI-driven evaluation is meant to move beyond superficial assessments and capture the complexities of information integrity that conventional metrics often miss. The underlying principle is that while AI can process vast amounts of data quickly, human judgment remains indispensable for discerning truth, nuance, and potential bias in sophisticated informational contexts.
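The article does not say how Forum AI measures agreement between its AI judges and human experts. As a rough illustration only, the sketch below assumes each model response receives one categorical verdict (for example "pass" or "fail") from an expert and one from an AI judge. It computes raw percent agreement, the simplest reading of a "90% agreement" figure, alongside Cohen's kappa, a standard chance-corrected alternative. The function names and verdict labels are hypothetical, not Forum AI's.

```python
from collections import Counter

# Hypothetical sketch: assumes each benchmark item gets one categorical verdict
# from a human expert and one from an AI judge. Not Forum AI's actual method.


def percent_agreement(expert: list[str], judge: list[str]) -> float:
    """Share of items where the AI judge's verdict matches the expert's."""
    if not expert or len(expert) != len(judge):
        raise ValueError("verdict lists must be non-empty and equal in length")
    matches = sum(e == j for e, j in zip(expert, judge))
    return matches / len(expert)


def cohens_kappa(expert: list[str], judge: list[str]) -> float:
    """Agreement corrected for the matches expected by chance alone."""
    observed = percent_agreement(expert, judge)  # also validates the inputs
    n = len(expert)
    expert_counts = Counter(expert)
    judge_counts = Counter(judge)
    # Chance agreement: probability that two independent raters with these
    # label frequencies would pick the same label for an item.
    expected = sum(
        (expert_counts[label] / n) * (judge_counts[label] / n)
        for label in expert_counts.keys() | judge_counts.keys()
    )
    if expected == 1.0:
        # Both raters used one identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical verdicts on ten benchmark items.
    expert = ["pass", "fail", "pass", "pass", "fail",
              "pass", "pass", "fail", "pass", "pass"]
    judge = ["pass", "fail", "pass", "fail", "fail",
             "pass", "pass", "fail", "pass", "pass"]
    print(percent_agreement(expert, judge))       # 0.9
    print(round(cohens_kappa(expert, judge), 2))  # 0.78
```

In this made-up sample the judge matches the expert on 9 of 10 items, yet kappa is only about 0.78, because "pass" dominates both sets of verdicts and some matches would happen by chance. Which measure and threshold suit a given benchmark depends on how its labels are distributed.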

Preliminary Findings: A Long Way to Go for AI Accuracy

Forum AI’s initial evaluations of leading foundation models have produced findings that Brown describes as far from encouraging, reinforcing her conviction that the industry has a long way to go. She cited troubling examples, such as Google’s Gemini drawing on Chinese Communist Party websites for stories that had "nothing to do with China," which raises serious concerns about source integrity and potential ideological influence. Nearly all models also showed a discernible left-leaning political bias, a pervasive problem that may stem from training data or model design and that can subtly shape the information users receive.

Beyond overt inaccuracies and biases, Forum AI found subtler but equally damaging failures: models missing crucial context, overlooking diverse perspectives, and "straw-manning arguments without acknowledgment," that is, misrepresenting an opposing argument to make it easier to attack, without flagging that they have done so. These failures are especially problematic in high-stakes domains, where incomplete or skewed information can lead to misinformed decisions with significant consequences. Brown conceded, "There’s a long way to go," but also expressed optimism: "I also think that there are some very easy fixes that would vastly improve the outcomes." Such fixes could include more diverse and carefully curated training data, fine-tuning with expert feedback, and a greater emphasis on responsible development practices.

The Echoes of Social Media: Learning from Past Mistakes

Brown draws direct parallels between AI’s current challenges and her experience at Facebook, where she saw firsthand the damage done by optimizing for the wrong metrics. On social media, the relentless pursuit of "engagement" (likes, shares, comments) often amplified sensationalism, division, and, ultimately, misinformation. The fact-checking program she helped build at Facebook to counter that problem no longer exists, a reminder of how hard it is to impose accuracy on systems designed for virality. The lesson, largely ignored by the social media industry, is that prioritizing engagement above all else has been "lousy for society," leaving many people less informed and more polarized.

This historical context is crucial for understanding Brown’s urgency with AI. She views generative AI not merely as a new technology but as a potential repeat of the information crisis, albeit on an even grander scale. The hope is that AI, unlike social media, can break this cycle. "Right now it could go either way," she posited, suggesting that companies have a choice: either cater to user preferences for novelty and sensationalism, or "give people what’s real and what’s honest and what’s truthful." While acknowledging that an idealistic vision of "AI optimizing for truth" might sound naive, she believes a powerful, pragmatic ally exists: enterprise.

The Enterprise Imperative: Liability and the Demand for Accuracy

Brown contends that business incentives, rather than altruism, will ultimately drive demand for accurate and reliable AI. Companies that use AI for critical decisions, such as credit scoring, loan approvals, insurance assessments, and hiring, face substantial liability risks: inaccurate or biased outputs in these areas can lead to financial losses, legal challenges, reputational damage, and regulatory penalties. "They’re going to want you to optimize for getting it right," she said, pointing to the commercial case for precision and fairness.

This enterprise demand is the foundation of Forum AI’s business model, but turning compliance interest into consistent revenue has its own challenges. Brown notes that much of the market is still content with what she calls "checkbox audits" and standardized benchmarks, which she considers woefully inadequate for assessing complex AI systems. Regulation, though evolving, is still nascent and often ineffective. She bluntly described the current compliance environment as "a joke," pointing to New York City: after the city passed the first hiring bias law requiring AI audits, the state comptroller found that more than half of the audited systems had undetected violations, a sign of the gap between regulatory intent and actual enforcement. Real evaluation, Brown argues, requires deep domain expertise to examine not only known scenarios but also obscure "edge cases" that can cause unforeseen trouble. That work takes significant time and specialized knowledge, which is why, in her words, "smart generalists aren’t going to cut it."

The Disconnect: Silicon Valley’s Vision vs. User Reality

Forum AI, which raised $3 million last fall in a round led by Lerer Hippeau, sees a significant disconnect between the AI industry’s self-image and the everyday reality for most users. Brown describes the contrast: "You hear from the leaders of the big tech companies, ‘This technology is going to change the world,’ ‘it’s going to put you out of work,’ ‘it’s going to cure cancer.’" That rhetoric often clashes with what users actually experience. "But then to a normal person who’s just using a chatbot to ask basic questions, they’re still getting a lot of slop and wrong answers," she said.

That gap fuels widespread public skepticism. Trust in AI is currently extremely low, and Brown believes that distrust is, in many cases, entirely justified. Surveys have repeatedly found that many people worry about AI’s reliability, ethical implications, and potential to displace jobs, and that lack of trust is a major barrier to broad, beneficial adoption. As Brown puts it, "The conversation is sort of happening in Silicon Valley around one thing, and a totally different conversation is happening among consumers." Bridging that gap, she suggests, will take not just technical progress but a fundamental shift in how AI is developed, evaluated, and presented to the world, with accuracy and reliability at its core.

The work of Forum AI represents a crucial intervention in the unfolding narrative of artificial intelligence. By bringing human expert judgment to the forefront of AI evaluation, Campbell Brown and her team are striving to ensure that this transformative technology serves humanity by delivering truth and context, rather than merely replicating or amplifying the information challenges of the past. The stakes are undeniably high, impacting everything from global politics to individual financial well-being, underscoring the imperative for AI to be not just intelligent, but also truthful.