Artificial intelligence is not merely transforming industries and daily lives; it is simultaneously forging an entirely new language to articulate its rapid advancements. Navigating the discourse around AI often means encountering acronyms like LLMs, RAG, and RLHF, alongside terms such as ‘diffusion’ and ‘hallucination,’ which can leave even seasoned tech professionals feeling a sense of insecurity. This article aims to demystify this burgeoning lexicon, offering a living document that evolves with the field it describes, providing crucial context and clarity for understanding the forces shaping our future.

The current epoch of AI development, particularly the rise of generative AI, has necessitated a vocabulary that captures the nuances of increasingly sophisticated algorithms and systems. From the fundamental computational power that underpins these models to the aspirational goal of human-level intelligence, each term reflects a significant concept, challenge, or breakthrough. Understanding these definitions is paramount for anyone seeking to grasp the implications of AI’s pervasive influence, whether in technological innovation, economic shifts, or societal restructuring.

AGI (Artificial General Intelligence): The Elusive Horizon

Artificial General Intelligence, or AGI, remains one of the most debated and nebulous terms within the AI community. Generally, it refers to AI systems that possess cognitive capabilities comparable to, or exceeding, those of an average human across a wide spectrum of intellectual tasks. Unlike current narrow AI, which excels at specific functions like playing chess or recognizing images, AGI would theoretically demonstrate versatility, adaptability, and the ability to learn and apply knowledge across diverse domains.

Prominent figures and organizations offer slightly varied definitions, underscoring the term’s evolving nature. OpenAI CEO Sam Altman once envisioned AGI as "the equivalent of a median human that you could hire as a co-worker," suggesting a functional benchmark for collaborative intelligence. OpenAI’s official charter, however, defines AGI as "highly autonomous systems that outperform humans at most economically valuable work," highlighting a more expansive and economically impactful threshold. Google DeepMind contributes another perspective, viewing AGI as "AI that’s at least as capable as humans at most cognitive tasks." The slight differences in these definitions reflect ongoing internal and external discussions about what constitutes true general intelligence and how it might be measured or recognized. Despite these expert attempts at clarification, a universally accepted definition remains elusive, contributing to the term’s enigmatic quality and the sense that even those at the forefront of AI research grapple with its precise meaning and implications. The pursuit of AGI drives much of the foundational research and investment in the AI sector, promising unprecedented transformations but also raising profound ethical, safety, and existential questions.

AI Agent: Autonomous Action Beyond Chatbots

An AI agent represents a significant leap beyond the capabilities of a basic AI chatbot. It is a sophisticated tool designed to leverage AI technologies to perform a sequence of tasks autonomously on a user’s behalf. Unlike a chatbot that merely responds to queries, an AI agent can initiate and execute multi-step processes, such as managing expense reports, booking travel arrangements, securing restaurant reservations, or even engaging in complex software development cycles like writing and maintaining code.

The concept implies an autonomous system capable of drawing upon multiple underlying AI models and interacting with various external services to achieve a defined goal. While the infrastructure to fully realize the envisaged capabilities of AI agents is still under construction, the fundamental idea is one of proactive, intelligent execution. This emergent space is characterized by a high degree of innovation, with developers exploring how these agents can interpret user intent, plan actions, execute them through API endpoints, and learn from feedback. The potential for AI agents to streamline workflows and automate routine, and even complex, tasks across industries is immense, although it also introduces new challenges related to oversight, reliability, and security.
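
The plan-then-execute idea described above can be made concrete with a toy sketch. Everything in it is hypothetical: the two "tools" are ordinary Python functions standing in for real API endpoints, and the planner is hard-coded, whereas an actual agent would typically ask a model to produce the plan.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: plain functions standing in for real API endpoints.
def search_flights(destination: str) -> str:
    return f"Found flight to {destination} for $420"

def book_flight(destination: str) -> str:
    return f"Booked flight to {destination}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "search_flights": search_flights,
    "book_flight": book_flight,
}

def plan(goal: str) -> List[Tuple[str, str]]:
    """Toy planner: treats the last word of the goal as the destination."""
    destination = goal.rsplit(" ", 1)[-1]
    return [("search_flights", destination), ("book_flight", destination)]

def run_agent(goal: str) -> List[str]:
    """Execute each planned step in order and collect the results."""
    log = []
    for tool_name, argument in plan(goal):
        log.append(TOOLS[tool_name](argument))
    return log

print(run_agent("Book a trip to Lisbon"))
# ['Found flight to Lisbon for $420', 'Booked flight to Lisbon']
```

The point of the sketch is the shape of the loop: a goal becomes a list of steps, and each step is dispatched to a tool without a human pressing the button each time.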

API Endpoints: The Digital Gateways for AI Automation

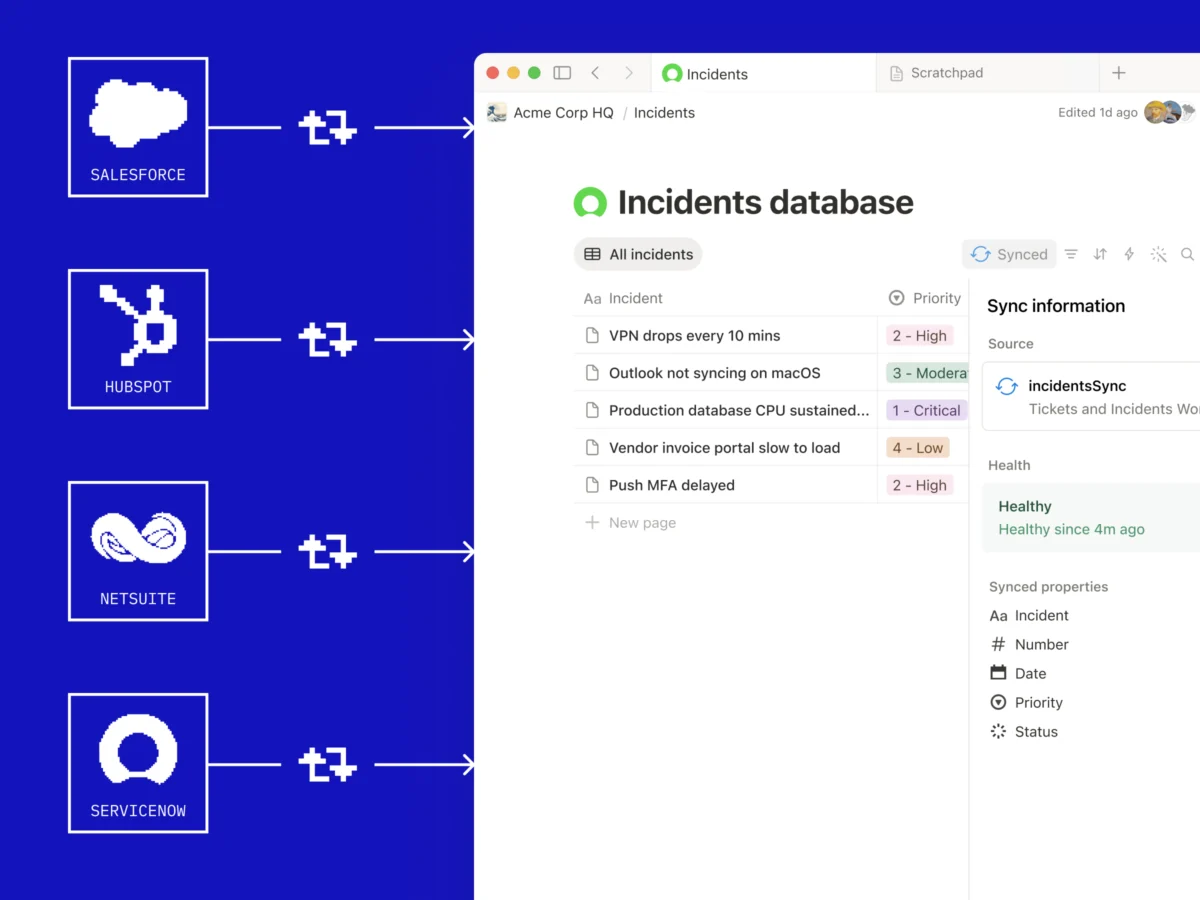

API endpoints can be conceptualized as the "buttons" or access points on the backend of a software application that other programs can "press" to invoke specific functions or retrieve data. These interfaces are fundamental to how developers construct integrations, enabling different applications to communicate and share information seamlessly. For instance, an application might use an API endpoint to pull weather data from a meteorological service or to process payments through a financial gateway.

In the context of AI, API endpoints are becoming increasingly crucial as AI agents grow in sophistication. They allow these autonomous systems to control third-party services directly, without requiring manual human intervention for each interaction. Many modern devices and connected platforms, from smart home ecosystems to enterprise software, feature these hidden programmatic interfaces. As AI agents evolve, their ability to independently discover, understand, and utilize these endpoints opens up powerful — and at times, unanticipated — possibilities for automation. This capability transforms AI from a mere data processor into an active participant in digital ecosystems, facilitating unprecedented levels of integration and operational efficiency, but also raising considerations about data privacy, security, and the potential for unintended automated actions.

Chain of Thought: Structured Reasoning for AI

The human brain can effortlessly answer simple questions, but for complex problems, a step-by-step approach is often necessary. Consider a riddle involving a farmer with chickens and cows totaling 40 heads and 120 legs; solving it requires breaking the problem down into intermediate algebraic equations. In the realm of Artificial Intelligence, Chain of Thought (CoT) reasoning applies this principle to Large Language Models (LLMs).

CoT is a prompting technique that encourages LLMs to articulate their reasoning process, breaking down a complex problem into a series of logical, intermediate steps. Instead of simply providing a final answer, the model generates a sequence of thoughts that lead to that conclusion. While this process might extend the time required to generate an answer, it significantly enhances the quality and accuracy of the output, particularly for tasks involving logic, mathematical calculations, or code generation. Reasoning models, often developed from traditional LLMs and optimized through techniques like reinforcement learning, are specifically trained to excel at this kind of step-by-step thinking. This method helps mitigate the tendency of LLMs to "hallucinate" or provide incorrect answers, making them more reliable for critical applications. The development of CoT reasoning marks a significant step towards making AI systems more transparent, verifiable, and robust in their problem-solving capabilities, mirroring human cognitive processes more closely.
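
To make the farmer riddle concrete, here is a small sketch of the kind of intermediate steps a chain of thought would spell out. It is plain arithmetic, not a model; the function name is made up for illustration, and the only assumption is the usual one that chickens have two legs and cows have four.

```python
from typing import Tuple

def solve_farm_riddle(heads: int, legs: int) -> Tuple[int, int]:
    """Return (chickens, cows) by reasoning in explicit steps."""
    # Step 1: if every animal were a chicken, there would be 2 legs per head.
    legs_if_all_chickens = 2 * heads
    # Step 2: the legs left over must belong to cows, which have 2 extra legs each.
    extra_legs = legs - legs_if_all_chickens
    cows = extra_legs // 2
    # Step 3: the remaining heads are chickens.
    chickens = heads - cows
    return chickens, cows

print(solve_farm_riddle(40, 120))  # (20, 20)
```

Checking the answer is itself a reasoning step: 20 chickens and 20 cows give 40 heads and 40 + 80 = 120 legs.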

Coding Agents: Automating the Software Development Lifecycle

Coding agents represent a specialized subset of AI agents, focused entirely on the domain of software development. While a general AI agent can perform a variety of tasks, a coding agent is engineered to autonomously execute actions step-by-step to achieve programming goals. This goes far beyond merely suggesting code snippets for a human developer to review and integrate.

A sophisticated coding agent can independently write, test, and debug code, tackling the iterative, trial-and-error work that traditionally consumes a significant portion of a developer’s day. These agents can operate across entire codebases, identifying bugs, running comprehensive test suites, and even pushing fixes with minimal human oversight. They function much like an exceptionally fast and tireless intern, capable of maintaining focus and working continuously. However, much like any intern, human supervision remains critical; a human developer is still essential for reviewing the agent’s work, ensuring quality, adherence to architectural principles, and alignment with broader project goals. The advent of coding agents promises to significantly enhance developer productivity, accelerate software delivery cycles, and potentially reshape the nature of programming work itself, allowing human developers to focus on higher-level design and architectural challenges.

Compute: The Engine of AI Progress

"Compute" is a somewhat encompassing term, but it fundamentally refers to the vital computational power that enables AI models to function, train, and deploy. This processing capability is the lifeblood of the modern AI industry, providing the raw horsepower necessary to handle the massive datasets and complex algorithms that characterize contemporary AI systems.

The term is also often used as shorthand for the hardware that provides this power: Graphics Processing Units (GPUs), Central Processing Units (CPUs), Tensor Processing Units (TPUs), and other custom-designed AI accelerators. These components, particularly GPUs, which excel at parallel processing, form the bedrock infrastructure upon which the entire AI ecosystem is built. The demand for compute resources has skyrocketed with the increasing size and complexity of AI models, leading to intense competition among tech giants and AI labs. Access to sufficient compute is a critical determinant of an organization’s ability to innovate, train cutting-edge models, and deploy AI solutions at scale. The significant energy consumption associated with large-scale compute operations also raises environmental concerns and underscores the need for more energy-efficient hardware and algorithms.

Deep Learning: Multi-Layered Neural Networks for Complex Patterns

Deep learning is a powerful subset of machine learning, characterized by AI algorithms structured as multi-layered artificial neural networks (ANNs). This architectural design, inspired by the interconnected pathways of neurons in the human brain, allows these algorithms to identify and learn highly complex patterns and correlations within vast datasets, far beyond the capabilities of simpler machine learning models like linear regressions or decision trees.

A key advantage of deep learning algorithms is their ability to automatically extract and identify important features from raw data, eliminating the need for human engineers to hand-craft these features. The multi-layered structure facilitates hierarchical learning, where each layer learns to detect different levels of abstraction in the data, from basic edges in an image to complex object representations. Furthermore, deep learning systems are designed to learn from their errors and iteratively improve their outputs through processes of repetition and adjustment, often leveraging backpropagation algorithms. However, this sophistication comes with demands: deep learning models typically require immense volumes of data (often millions or more data points) to achieve optimal results, and their training can be computationally intensive and time-consuming, leading to higher development costs. Despite these challenges, deep learning has driven breakthroughs in areas like image recognition, natural language processing, and medical diagnostics, forming the foundation for many generative AI applications.
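
The "learn from errors and adjust" loop can be illustrated at the smallest possible scale. The sketch below is not a deep network: it fits a single weight with gradient descent on made-up data, which is the one-parameter case of the adjustment that backpropagation carries out across many layers. The data, learning rate, and epoch count are arbitrary choices for illustration.

```python
def train(xs, ys, learning_rate=0.01, epochs=200):
    """Fit y = w * x by repeatedly nudging w against the error gradient."""
    w = 0.0
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        # Move w a small step in the direction that reduces the error.
        w -= learning_rate * grad
    return w

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated by the rule y = 2x

w = train(xs, ys)
print(round(w, 3))  # 2.0
```

Each pass measures how wrong the current weight is and corrects it slightly; a deep network repeats the same idea for millions or billions of weights at once.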

Diffusion: The Art of Restoration from Noise

Diffusion models represent a groundbreaking technological advancement at the core of many contemporary generative AI systems, particularly those capable of creating realistic images, music, and even text. The concept draws inspiration from physics, where diffusion describes a spontaneous and irreversible process, such as sugar dissolving in coffee. In the AI context, diffusion systems are trained to slowly "destroy" the structure of data—whether it’s an image, an audio clip, or a text sequence—by progressively adding noise until the original data is indistinguishable.

The ingenuity lies in the "reverse diffusion" process. While physical diffusion is irreversible, AI diffusion models learn to reverse this degradation. Through extensive training, the model learns how to gradually remove the noise, step by step, to reconstruct the original data. This learned "reverse diffusion" capability is then used to generate entirely new data. By starting with random noise and applying the learned reverse process, the model can synthesize novel outputs that resemble the data it was trained on. This technique has revolutionized generative AI, enabling the creation of highly realistic and diverse content, powering applications from sophisticated art generators like Stable Diffusion and Midjourney to advanced video and audio synthesis tools. The ability to control the generation process by guiding the noise removal also allows for creative manipulation, such as editing images or transforming styles.
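
The "destroy" half of the process is simple enough to sketch directly. The code below shows only the forward noising step, using the common formulation in which each step slightly shrinks the signal and mixes in Gaussian noise; the step count and noise level `beta` are illustrative values, not taken from any particular system. The reverse step is the hard part and requires a trained neural network, so it is not shown.

```python
import math
import random

def add_noise(data, beta, rng):
    """One forward step: shrink the signal slightly and mix in Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * x + spread * rng.gauss(0.0, 1.0) for x in data]

def forward_diffusion(data, steps=500, beta=0.02, seed=0):
    """Return the list of progressively noisier versions of the data."""
    rng = random.Random(seed)
    trajectory = [list(data)]
    for _ in range(steps):
        trajectory.append(add_noise(trajectory[-1], beta, rng))
    return trajectory

def signal_fraction(steps, beta):
    """Fraction of the original signal that survives after the given steps."""
    return math.sqrt(1.0 - beta) ** steps

pixels = [0.9, 0.1, 0.5, 0.7]  # stand-in for image data
trajectory = forward_diffusion(pixels)
print(len(trajectory), signal_fraction(500, 0.02) < 0.01)  # 501 True
```

After enough steps less than one percent of the original signal remains, which is why generation can start from pure random noise and run the learned process in reverse.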

Distillation: Compressing Knowledge into Leaner Models

Distillation is a sophisticated technique employed in AI to transfer knowledge from a larger, more complex "teacher" model to a smaller, more efficient "student" model. This process involves the student model learning to approximate the behavior and outputs of the teacher model. Developers achieve this by sending requests to the powerful teacher model and recording its responses. These outputs, sometimes cross-referenced with a ground-truth dataset for accuracy, then serve as the training data for the student model.
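
The record-then-train workflow can be sketched with toy models. Here both teacher and student are hypothetical one-line logistic functions, so the student can match the teacher almost exactly; in practice the teacher is a large model queried many times, and the student is a smaller network that only approximates it.

```python
import math

def teacher(x: float) -> float:
    """Stand-in for a large model: probability of the positive class."""
    return 1.0 / (1.0 + math.exp(-(3.0 * x - 1.5)))

def student(x: float, w: float, b: float) -> float:
    """Small model with two trainable parameters."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))

def distill(inputs, epochs=2000, lr=0.5):
    # Step 1: send requests to the teacher and record its responses.
    targets = [teacher(x) for x in inputs]
    # Step 2: train the student to reproduce those responses.
    w, b = 0.0, 0.0
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, t in zip(inputs, targets):
            error = student(x, w, b) - t  # cross-entropy gradient w.r.t. the logit
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / len(inputs)
        b -= lr * grad_b / len(inputs)
    return w, b

inputs = [i / 10 for i in range(-20, 21)]
w, b = distill(inputs)
gap = max(abs(student(x, w, b) - teacher(x)) for x in inputs)
print(f"largest student-teacher gap: {gap:.4f}")
```

Note that the student never sees ground-truth labels in this sketch; it learns only from what the teacher said, which is the defining feature of the technique.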

The primary benefit of distillation is the creation of smaller, faster, and less computationally expensive models without a significant loss in performance. This is particularly valuable for deploying AI on devices with limited resources or for reducing inference costs in large-scale applications. For example, it is widely believed that OpenAI utilized distillation to develop GPT-4 Turbo, a version of GPT-4 optimized for speed and efficiency. While major AI companies are understood to use distillation internally to optimize their own products, the technique also raises ethical and legal questions. Distilling from a competitor’s API or chat assistant, if not explicitly permitted, typically violates that provider’s terms of service, and suspected cases have prompted companies to investigate improper use of their models. Used within those limits, distillation is a critical technique for making advanced AI more accessible and practical for a wider range of applications.

Fine-tuning: Specializing General AI Models

Fine-tuning is a crucial post-training process where a pre-existing AI model, often a large language model (LLM) that has undergone extensive general training, is further optimized for a more specific task or domain. This involves feeding the model additional, highly specialized, and task-oriented data that was not a primary focus during its initial broad training.

The objective of fine-tuning is to enhance the model’s performance, accuracy, and relevance for a particular application, sector, or user group. Many AI startups and enterprises leverage powerful foundational models as a starting point, then fine-tune them with proprietary or domain-specific datasets—such as legal documents, medical literature, or customer service logs—to build commercial products tailored to niche markets. This approach significantly reduces the time and computational resources required compared to training a model from scratch. Fine-tuning allows models to internalize the specific jargon, nuances, and patterns of a particular field, making them far more effective for specialized tasks. For example, an LLM fine-tuned on medical texts can provide more accurate and contextually relevant responses to health-related queries than a general-purpose LLM. This iterative process of specialization is key to unlocking AI’s utility across a diverse range of vertical applications.

GAN (Generative Adversarial Network): The Adversarial Path to Realism

A Generative Adversarial Network, or GAN, is a sophisticated machine learning framework that has been instrumental in the development of generative AI, particularly in producing highly realistic synthetic data. This framework operates on an ingenious principle involving a pair of neural networks: a "generator" and a "discriminator," locked in a continuous adversarial competition.

The generator’s role is to create new data, such as images, audio, or text, that resembles the examples the network was trained on. These generated outputs are then passed to the discriminator, whose function is to distinguish real data (drawn from the training set) from synthetic data produced by the generator. Essentially, the two models are trained to outperform each other. The generator continuously refines its ability to produce outputs that are indistinguishable from real data, striving to "fool" the discriminator. Concurrently, the discriminator enhances its capability to detect artificially generated data. This structured, competitive process drives both networks to improve, ultimately pushing the generator’s outputs to become remarkably realistic, often without the need for additional human intervention during the generation phase. While GANs have demonstrated impressive capabilities in narrower applications, such as generating photorealistic faces or transforming image styles, they tend to be less well suited to broader, general-purpose generation tasks than newer approaches such as diffusion models. Their development, however, was a significant milestone in generative AI, laying groundwork for deepfake technologies and other synthetic media.

Hallucination: The AI’s Fictional Narratives

"Hallucination" is the widely adopted term within the AI industry to describe instances where AI models, particularly Large Language Models (LLMs), generate information that is factually incorrect, nonsensical, or entirely fabricated, presenting it as truth. This phenomenon poses a significant challenge to AI quality and reliability, directly impacting the trustworthiness and utility of generative AI outputs.

The consequences of AI hallucinations can range from merely misleading to dangerously harmful. Imagine an AI providing incorrect legal advice, generating false medical diagnoses, or fabricating historical events; such errors could lead to severe real-life risks and adverse outcomes. The problem is generally thought to arise from gaps or biases in the vast datasets on which AI models are trained, leading the models to "confidently" generate plausible-sounding but erroneous information. As a response to this critical issue, there is a growing industry push towards developing increasingly specialized and "vertical" AI models. These domain-specific AIs are trained on narrower, highly curated datasets, which reduces the likelihood of knowledge gaps and, consequently, the incidence of hallucinations. Strategies like Retrieval-Augmented Generation (RAG) are also being developed to ground LLMs in verified external knowledge bases, further reducing the risk of misinformation and enhancing the factual accuracy of AI-generated content. Addressing hallucinations is a central focus for responsible AI development, aiming to build systems that are not only creative but also reliable and verifiably accurate.

Inference: Putting AI Models to Work

Inference is the critical process of running a trained AI model to make predictions, draw conclusions, or generate outputs from new, previously unseen data. It is the practical application phase of an AI model, distinct from its training phase. To be clear, inference cannot occur without prior training; a model must first learn patterns and relationships within a dataset before it can effectively extrapolate from that learning to interpret new inputs.

During inference, the AI model processes incoming data and, based on the statistical patterns it learned during training, produces a result. For example, an image recognition model performs inference when it identifies an object in a new photograph, or an LLM performs inference when it generates a response to a user’s prompt. The efficiency of inference is paramount for real-world applications, as it directly impacts response times and user experience. While various types of hardware, from smartphone processors to high-end GPUs and custom AI accelerators, can perform inference, their capabilities vary significantly. Very large, complex models would take an impractical amount of time to generate predictions on a standard laptop compared to the rapid processing achievable on a cloud server equipped with specialized AI chips. Optimizing inference speed and cost is a major area of focus for AI developers and infrastructure providers, ensuring that AI services can scale and deliver timely results to users.

Large Language Model (LLM): The Core of Generative AI

Large Language Models (LLMs) are the foundational AI models powering many popular AI assistants and generative applications, including ChatGPT, Claude, Google’s Gemini, Meta’s Llama, Microsoft Copilot, and Mistral’s Le Chat. When users interact with these AI chatbots, they are directly engaging with an LLM, which processes their requests and generates responses, often augmented by various tools like web browsing or code interpreters.

LLMs are essentially deep neural networks of immense scale, composed of billions, or even trillions, of numerical parameters (or "weights"). Through extensive training on colossal datasets of text and code—billions of books, articles, websites, and transcripts—these models learn the intricate statistical relationships between words, phrases, and concepts. They construct a sophisticated, multi-dimensional representation of language, mapping semantic connections and grammatical structures. When prompted, an LLM generates the most statistically probable sequence of tokens (words or sub-word units) that fits the input, effectively predicting the next best word. This probabilistic approach allows them to generate coherent, contextually relevant, and often highly creative text, ranging from answering questions and summarizing documents to writing poetry and generating code. The development of LLMs has marked a turning point in AI, democratizing access to powerful language generation capabilities and profoundly impacting how humans interact with information and technology. However, their vastness also contributes to challenges like "hallucinations" and the potential for bias embedded in their training data.
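
The "most statistically probable next token" step can be shown in a few lines. The scores below are invented for illustration; a real model would compute one score per entry in a vocabulary of tens of thousands of tokens. The softmax function, which turns raw scores into probabilities, is the standard one.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {token: math.exp(score - peak) for token, score in logits.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}

# Hypothetical scores for the token following "The cat sat on the".
logits = {"mat": 4.0, "sofa": 2.5, "moon": 0.5}

probs = softmax(logits)
next_token = max(probs, key=probs.get)
print(next_token)  # mat
```

Always taking the single most likely token is only one decoding strategy. Deployed systems often sample from the probabilities instead, which is one reason the same prompt can yield different answers.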

Memory Cache: Accelerating AI Inference

Memory cache, specifically in the context of AI, refers to an essential optimization technique designed to significantly boost the efficiency and speed of AI inference—the process by which an AI model generates a response to a user’s query. AI operations are computationally intensive, involving vast numbers of mathematical calculations. Each time these calculations are performed, they consume computational power. Caching is engineered to reduce this repetitive computational load.

The core principle of caching is to store frequently accessed data or previously computed results in a fast-access memory area so that they can be retrieved quickly for subsequent user queries or operations, rather than being recalculated from scratch. This dramatically cuts down on the number of computations a model needs to run. One prominent type of memory caching is KV (Key-Value) caching, which is particularly relevant for transformer-based models, the architecture underpinning most modern LLMs. KV caching improves efficiency by storing the "key" and "value" representations of past tokens during sequence generation. When the model needs to generate the next token, it can reuse the cached keys and values from previous tokens, reducing redundant computations and accelerating the generation of answers. This optimization is critical for enabling faster response times, higher token throughput, and more scalable AI services, directly impacting the user experience and the economic viability of large-scale AI deployments.
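
A stripped-down sketch can show where the savings in KV caching come from. The `project` function is a hypothetical stand-in for the learned key and value projections of a transformer (here just a scaling), the token itself is used as the query, and there is a single attention head; none of that reflects a production implementation. What the sketch does preserve is the bookkeeping: with a cache, each token is projected once, while without one every earlier token is projected again at every step.

```python
import math

def project(vec, scale):
    """Stand-in for a learned key or value projection (hypothetical: a plain scaling)."""
    return [scale * v for v in vec]

def attend(query, keys, values):
    """Softmax-weighted average of the values, scored by query-key dot products."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [
        sum(w * value[i] for w, value in zip(weights, values)) / total
        for i in range(len(values[0]))
    ]

class KVCache:
    def __init__(self):
        self.keys, self.values = [], []
        self.projections = 0  # tokens projected so far

    def step(self, token_vec):
        # Only the newest token is projected; earlier keys and values are reused.
        self.keys.append(project(token_vec, 0.5))
        self.values.append(project(token_vec, 2.0))
        self.projections += 1
        return attend(token_vec, self.keys, self.values)

def step_without_cache(token_vecs):
    # Every token seen so far is projected again from scratch.
    keys = [project(t, 0.5) for t in token_vecs]
    values = [project(t, 2.0) for t in token_vecs]
    return attend(token_vecs[-1], keys, values), len(token_vecs)

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

cache = KVCache()
for t in tokens:
    cached_out = cache.step(t)

recomputed = 0
for i in range(1, len(tokens) + 1):
    plain_out, n = step_without_cache(tokens[:i])
    recomputed += n

print(cache.projections, recomputed)  # 3 6
```

Both paths produce the same output for the final token. The gap in projection counts grows with sequence length (n versus n(n+1)/2), which is why the cache matters most for long responses.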

Neural Network: The Brain-Inspired Algorithm

A neural network is the multi-layered algorithmic architecture that forms the foundational backbone of deep learning and, by extension, the recent explosion in generative AI tools, especially Large Language Models. Its design draws inspiration from the complex, interconnected pathways of neurons in the human brain.

While the conceptual idea of emulating biological neural networks for data processing dates back to the 1940s with models like the McCulloch-Pitts neuron, and later Rosenblatt’s Perceptron in the 1950s, the true potential of this theory was largely unlocked in recent decades. This resurgence was primarily fueled by dramatic advancements in graphics processing units (GPUs), originally developed for the video game industry. GPUs proved exceptionally well-suited to handle the massive parallel computations required to train neural networks with many more layers than was previously feasible, which is what the "deep" in deep learning refers to. These multi-layered structures allow neural networks to learn hierarchical representations and identify increasingly abstract patterns within vast datasets. This capability has led to transformative breakthroughs across numerous domains, including highly accurate voice recognition, sophisticated image classification, autonomous navigation systems, and accelerated drug discovery. Neural networks are essentially complex mathematical functions that learn to map input data to desired outputs by adjusting the "weights" and "biases" associated with their interconnected nodes, enabling AI systems to achieve unprecedented performance across a diverse array of tasks.
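
To show what "a mathematical function built from weights and biases" looks like, here is a minimal two-layer network evaluated by hand. The weights are hand-picked for illustration so that the network computes XOR (output 1 when exactly one input is 1); a real network would arrive at its weights through training rather than have them written in.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """Each node takes a weighted sum of its inputs, adds a bias, and squashes it."""
    return [
        sigmoid(sum(w * i for w, i in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Pass the inputs through every layer in turn."""
    activations = list(inputs)
    for weights, biases in layers:
        activations = layer(activations, weights, biases)
    return activations

# Hand-picked (not learned) parameters.
XOR_NETWORK = [
    ([[20.0, 20.0], [-20.0, -20.0]], [-10.0, 30.0]),  # hidden layer: OR-like and NAND-like nodes
    ([[20.0, 20.0]], [-30.0]),                        # output layer: AND of the two hidden nodes
]

cases = [(0, 0), (0, 1), (1, 0), (1, 1)]
print([round(forward(case, XOR_NETWORK)[0]) for case in cases])  # [0, 1, 1, 0]
```

XOR is a classic example because no single node can compute it on its own. It takes at least one hidden layer, which is a small-scale illustration of why depth adds expressive power.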

Open Source: The Collaborative Path in AI Development

"Open source" refers to software, and increasingly AI models, where the underlying code or model weights are made publicly available. This transparency allows anyone to freely inspect, use, modify, and distribute the technology. In the AI domain, Meta’s Llama family of models serves as a prominent example of this approach, drawing parallels to historical successes like the Linux operating system in the world of computing.

The open-source philosophy fosters a collaborative environment where researchers, developers, and companies worldwide can build upon each other’s work. This accelerates progress by reducing redundant efforts and encouraging collective innovation. Crucially, open-source models enable independent safety audits and rigorous scrutiny of their inner workings, which is a significant advantage over "closed source" systems. Closed-source models, such as OpenAI’s proprietary GPT models, keep their code and internal architecture private; users can interact with the product but cannot examine or modify its core components. The distinction between open-source and closed-source development has become one of the defining and most vigorously debated topics in the AI industry. Proponents of open source emphasize its role in democratizing AI access, fostering transparency, and potentially mitigating risks through collective vigilance, while advocates for closed source often cite competitive advantage, controlled deployment, and responsible development under proprietary guidance.

Parallelization: Simultaneous Processing for AI Scale

Parallelization is a fundamental computational strategy that involves executing multiple tasks or sub-tasks simultaneously, rather than sequentially. Conceptually, it’s akin to having a large team of employees working concurrently on different components of a project, rather than one employee completing each step individually. In the context of AI, parallelization is absolutely critical for both the training of complex models and the efficient execution of inference.

Modern GPUs, the hardware backbone of the AI industry, are specifically designed to perform thousands of calculations in parallel. This inherent capability is a primary reason why GPUs became indispensable for training deep neural networks, which involve vast numbers of simultaneous mathematical operations. As AI systems continue to grow in complexity and models become ever larger, the ability to distribute and parallelize computational work across numerous chips and even entire clusters of machines has become one of the most significant factors determining the speed, scalability, and cost-effectiveness of building and deploying AI models. Research into more advanced parallelization strategies is now a dedicated field of study, focusing on optimizing data distribution, communication protocols, and algorithmic design to maximize computational throughput. Effective parallelization is essential for meeting the ever-increasing demands of AI development and deployment, enabling faster iteration, larger models, and more responsive AI services.
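
The split-the-work-then-combine pattern can be sketched with Python’s standard library. The example computes a dot product, a basic building block of neural network math, by handing chunks to a pool of workers. It is meant to show the structure only: in standard CPython, threads do not actually speed up CPU-bound code like this, whereas a GPU performs the equivalent split in hardware. The function names and chunking scheme are illustrative choices.

```python
from concurrent.futures import ThreadPoolExecutor

def partial_dot(chunk):
    """Dot product of one slice of the two vectors."""
    a, b = chunk
    return sum(x * y for x, y in zip(a, b))

def parallel_dot(a, b, workers=4):
    """Split the vectors into chunks, process the chunks concurrently, add the results."""
    size = max(1, -(-len(a) // workers))  # ceiling division
    chunks = [(a[i:i + size], b[i:i + size]) for i in range(0, len(a), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_dot, chunks))

a = list(range(1000))
b = [2] * 1000
print(parallel_dot(a, b))  # 999000
```

The approach works because each chunk can be computed without knowing anything about the others. Workloads with that property are the ones that benefit most from parallel hardware.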

RAMageddon: The AI-Induced Memory Shortage

RAMageddon is a recently coined, somewhat dramatic, term reflecting a very real and pressing trend in the tech industry: an intensifying global shortage of Random Access Memory (RAM) chips. RAM is a critical component powering virtually all modern electronic devices, from smartphones and gaming consoles to enterprise servers and, crucially, AI data centers. The unprecedented surge in demand for high-performance RAM, primarily driven by the burgeoning AI industry, is creating a significant supply bottleneck.

The largest technology companies and leading AI research labs are aggressively acquiring vast quantities of RAM to fuel the training and inference of their increasingly massive and complex AI models. This immense demand is outstripping existing manufacturing capacity, leaving a dwindling supply for other sectors and driving up prices. Industries outside of cutting-edge AI are feeling the pinch profoundly. The gaming sector, for instance, has seen console manufacturers compelled to increase prices due to the escalating cost and scarcity of memory chips. The consumer electronics market faces potential disruptions, with memory shortages projected to contribute to the largest dip in smartphone shipments in over a decade. General enterprise computing is also affected, as companies struggle to procure sufficient RAM for their own data centers and cloud infrastructure. Analysts predict that this surge in prices and supply constraints will likely persist until manufacturing capacity can catch up with AI-driven demand, a resolution that shows little sign of materializing in the immediate future. RAMageddon underscores the broad economic ripple effects of the AI revolution, impacting supply chains and product costs across the entire technology landscape.

Reinforcement Learning: Learning Through Trial and Reward

Reinforcement learning (RL) is a dynamic paradigm for training AI systems where an agent learns to make optimal decisions by interacting with an environment. Unlike supervised learning, which relies on labeled datasets, RL systems learn through a process of trial and error, receiving "rewards" for desirable actions and "penalties" for undesirable ones. This process is analogous to training a pet with treats; the "pet" in this scenario is a neural network, and the