For years, truly self-improving artificial intelligence has been a foundational aspiration of the AI research community: a future in which machines learn and evolve with minimal human intervention. Once confined to theoretical discussions and long-term roadmaps, that vision is now moving toward realization. With unprecedented investment flowing into a new generation of research-driven AI laboratories, resources for tackling such ambitious goals are more abundant than ever. Among these "neolabs," Adaption, a firm led by former Cohere VP of AI Research Sara Hooker, has introduced a product designed to streamline AI training and fine-tuning.

On Wednesday, October 22, 2025, Adaption publicly launched AutoScientist, a platform that automates conventional fine-tuning so that AI models can rapidly acquire specific capabilities. While the underlying techniques apply across many domains, the Adaption team is particularly focused on their potential to accelerate and simplify the often arduous process of training and refining frontier-level AI models. The launch arrives at a critical juncture in the AI landscape, where the "scaling race" (the relentless pursuit of larger models with more parameters) often brings exorbitant computational costs and demands extensive human expertise.

The Vision of Autonomous AI Development

The idea of AI systems that improve themselves more effectively than human engineers could improve them has long been a holy grail for researchers. Early theoretical frameworks in machine learning hinted at the possibility, but practical implementation remained elusive because of computational limitations, data scarcity, and the inherent complexity of guiding model evolution. Over the last decade, advances in deep learning, particularly transformer architectures and large language models (LLMs), have brought this goal closer to reality. The ability of models to learn from vast datasets has sparked renewed interest in meta-learning and automated machine learning (AutoML) techniques. AutoScientist, according to Adaption, represents an evolution of these principles, moving beyond hyperparameter optimization alone to a more holistic co-optimization of both data and model parameters.

Sara Hooker, co-founder and CEO of Adaption, whose distinguished career includes a tenure as VP of AI research at Cohere, articulated the profound implications of AutoScientist. "What’s super exciting about it is that it co-optimizes both the data and the model, and learns the best way to basically learn any capability," Hooker explained in an interview with TechCrunch. Her statement underscores a paradigm shift: rather than a human painstakingly iterating on data selection and model adjustments, AutoScientist is designed to intelligently navigate this complex optimization landscape. This approach, she suggests, could finally "allow for successful frontier AI trainings outside of these labs," a reference to the handful of well-funded, resource-rich organizations currently dominating the frontier AI development space.
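
To make the idea of co-optimizing data and model more concrete, here is a minimal toy sketch. It is not Adaption's method, whose internals have not been described publicly in this coverage. It uses a classic stand-in, iteratively reweighted least squares, to show the general shape of such a loop: a model step refits parameters on the currently weighted data, and a data step down-weights examples the current model fits poorly. The function names, the synthetic dataset, and the weighting formula are all assumptions chosen for illustration.

```python
def fit_weighted_line(xs, ys, ws):
    """Weighted least-squares fit of y = a*x + b; returns (a, b)."""
    total = sum(ws)
    mean_x = sum(w * x for w, x in zip(ws, xs)) / total
    mean_y = sum(w * y for w, y in zip(ws, ys)) / total
    var_x = sum(w * (x - mean_x) ** 2 for w, x in zip(ws, xs))
    cov_xy = sum(w * (x - mean_x) * (y - mean_y) for w, x, y in zip(ws, xs, ys))
    a = cov_xy / var_x
    return a, mean_y - a * mean_x


def co_optimize(xs, ys, rounds=10, scale=1.0):
    """Alternate a model step and a data step for a fixed number of rounds."""
    ws = [1.0] * len(xs)  # start by trusting every example equally
    for _ in range(rounds):
        # Model step: refit the parameters on the currently weighted data.
        a, b = fit_weighted_line(xs, ys, ws)
        # Data step: down-weight examples the current model fits poorly.
        ws = [1.0 / (1.0 + ((y - (a * x + b)) / scale) ** 2) for x, y in zip(xs, ys)]
    return a, b, ws


# Hypothetical data: y = 2x + 1, with four labels corrupted by +20.
xs = [float(i) for i in range(20)]
corrupted = {2, 7, 12, 17}
ys = [2.0 * x + 1.0 + (20.0 if i in corrupted else 0.0) for i, x in enumerate(xs)]

plain_a, plain_b = fit_weighted_line(xs, ys, [1.0] * len(xs))
a, b, ws = co_optimize(xs, ys)
print(f"plain fit:    slope={plain_a:.3f} intercept={plain_b:.3f}")
print(f"co-optimized: slope={a:.3f} intercept={b:.3f}")
```

In this toy setting the plain fit's intercept is pulled well away from the true value of 1 by the corrupted labels, while the alternating loop ends up close to it and assigns the corrupted examples very small weights. A real system would operate on far richer notions of "data" and "model," but the feedback structure is the same in spirit.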

Addressing the Bottlenecks in AI Training

The development of advanced AI models, particularly those operating at the "frontier" of current capabilities, is an extraordinarily resource-intensive endeavor. It typically involves several key stages:

- Pre-training: Training a foundational model on massive, diverse datasets, often costing tens or hundreds of millions of dollars in compute alone.

- Fine-tuning: Adapting the pre-trained model to specific tasks or domains using smaller, more specialized datasets. This stage is crucial for making general-purpose models practically useful.

- Evaluation and Iteration: Rigorously testing the model’s performance and repeatedly adjusting data, architectures, and training parameters to improve results.

The fine-tuning and iteration phases are often the most time-consuming and expertise-dependent. Data curation, selection, and labeling are notorious bottlenecks. Researchers often spend weeks or months experimenting with different datasets, learning rates, model configurations, and loss functions. The human element introduces variability and potential bias, and it limits the pace of iteration. This manual, iterative process becomes a significant barrier for smaller organizations, academic institutions, and even well-funded startups that lack the large teams of AI specialists and the computational infrastructure of the industry giants.

Industry estimates suggest that while the initial training of a state-of-the-art model can cost upwards of $100 million, subsequent fine-tuning and deployment for specific enterprise applications can still cost anywhere from hundreds of thousands to several million dollars, along with a significant investment of time. A report from a prominent tech consultancy earlier this year indicated that the average time from initial concept to deployment for a specialized enterprise AI model is currently around 9 to 18 months, with fine-tuning accounting for a substantial portion of that timeline. Tools like AutoScientist aim to compress these timelines and reduce the associated costs.

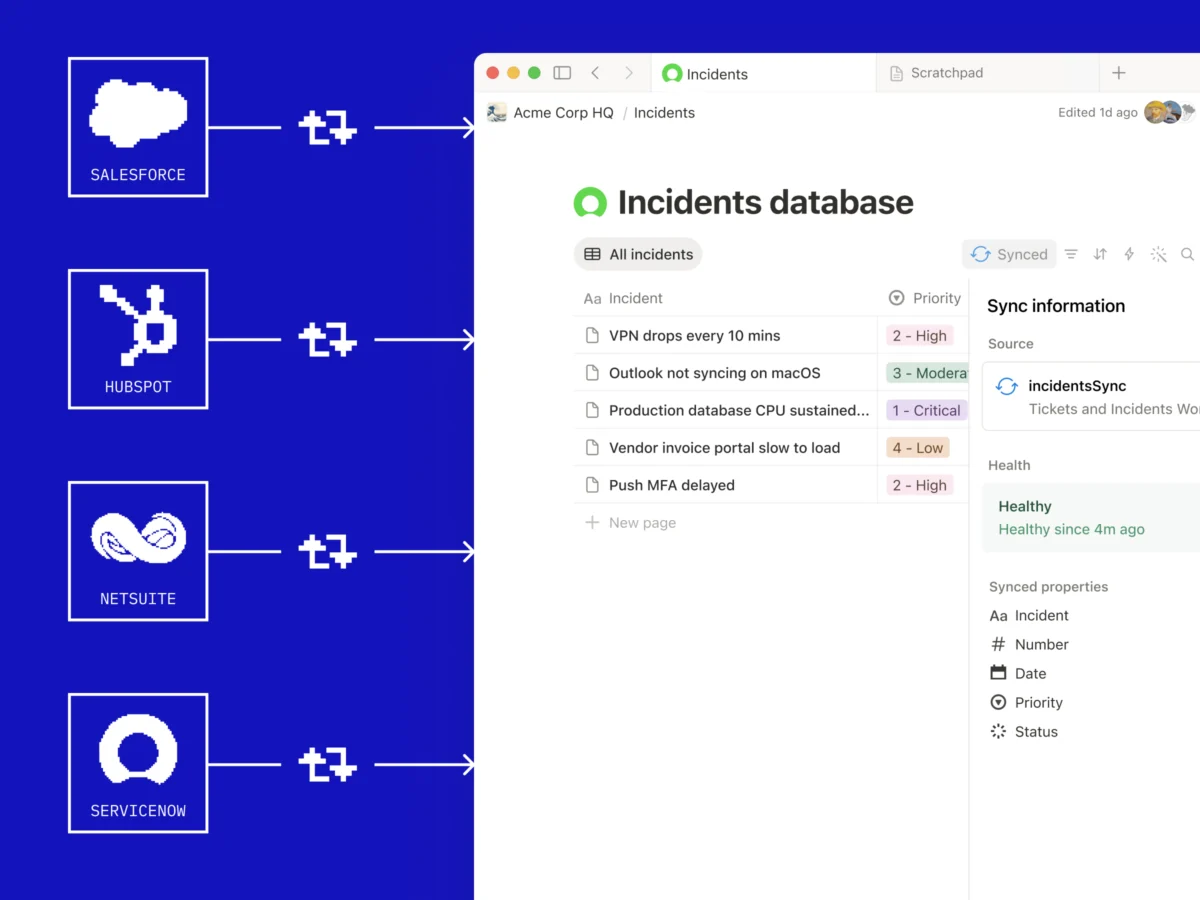

Adaption’s Integrated Approach: From Data to Model

AutoScientist is not a standalone innovation but rather builds upon Adaption’s existing data-centric offering, Adaptive Data. Launched earlier, Adaptive Data addresses the fundamental challenge of building and maintaining high-quality datasets, which are the lifeblood of any effective AI model. It focuses on making it easier to construct continuously improving datasets over time, learning from interactions and feedback loops. AutoScientist is designed to seamlessly integrate with this data foundation, turning those evolving, high-quality datasets into continuously improving AI models.

"Our view at Adaption is that the whole stack should be completely adaptable, and should basically optimize on the fly to whatever task you have," Hooker emphasized. This holistic philosophy suggests a departure from traditional modular AI development, where data pipelines and model architectures are often optimized independently. Instead, Adaption envisions a symbiotic relationship where the data and the model learn and adapt in concert, driven by the specific task at hand. This co-optimization process, while conceptually complex, promises greater efficiency and more robust outcomes by ensuring that data quality directly informs model learning, and model performance feeds back into data curation strategies.

Performance Claims and Benchmarking Challenges

In its launch materials, Adaption highlights performance metrics for AutoScientist, claiming that the system has more than doubled "win-rates" across various models. The numbers are impressive on the surface, but they are hard to interpret. Because AutoScientist tailors models to highly specific tasks and adapts them continuously, generalized benchmarks such as SWE-bench for software engineering or ARC-AGI for abstract reasoning are not directly applicable. Those benchmarks are designed to evaluate broad capabilities, whereas AutoScientist’s strength lies in specialized, dynamic optimization.
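
For readers unfamiliar with the metric, a win rate in pairwise evaluation is usually the share of head-to-head comparisons in which one model's output is preferred over a reference output. The sketch below shows that arithmetic under stated assumptions: the judgment labels, the half-credit convention for ties, and the sample numbers are all hypothetical, and Adaption has not said in this coverage how its own win-rates are computed.

```python
from collections import Counter


def win_rate(judgments, tie_weight=0.5):
    """Fraction of pairwise comparisons won by the candidate model.

    judgments: iterable of "win", "loss", or "tie", one per comparison.
    tie_weight: credit given to a tie (0.5 is an assumed convention).
    """
    counts = Counter(judgments)
    unknown = set(counts) - {"win", "loss", "tie"}
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no judgments provided")
    return (counts["win"] + tie_weight * counts["tie"]) / total


# Hypothetical judgments for the same ten prompts, before and after tuning.
baseline = ["win"] * 2 + ["tie"] * 2 + ["loss"] * 6
tuned = ["win"] * 6 + ["tie"] * 2 + ["loss"] * 2

before, after = win_rate(baseline), win_rate(tuned)
print(f"before={before:.2f} after={after:.2f} ratio={after / before:.2f}")
```

A ratio above 2 is what "more than doubled" would mean under this definition. The figure depends heavily on the prompts, the reference model, and who or what does the judging, which is why the context behind any reported win rate matters.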

The difficulty in comparing these "win-rates" to established industry benchmarks underscores a broader issue in the rapidly evolving field of AI evaluation. As models become more specialized and adaptive, the industry needs to develop new methodologies for assessing their real-world efficacy. Potential avenues for future validation could include rigorous user studies, task-specific competitive evaluations against human baselines or other automated fine-tuning tools, and transparent reporting of the specific contexts and datasets under which these "win-rates" were achieved. Despite the immediate challenge of contextualization, Adaption remains confident that users will experience a tangible difference once they integrate AutoScientist into their workflows. To facilitate this experiential validation, the company is offering the tool free of charge for the first 30 days post-release.

Broader Implications: Democratization and Innovation

The potential implications of AutoScientist extend beyond efficiency gains. Sara Hooker draws a parallel between AutoScientist and the advent of code generation tools. "The same way that code generation unlocked a lot of tasks, this is going to unlock a lot of innovation at the frontier of different fields," she stated. The comparison is apt: code generation widened access to software development by lowering the barrier to entry, enabling non-experts to create functional code. Similarly, AutoScientist could widen access to advanced AI development, allowing more organizations and individuals to use frontier AI models without an army of specialized researchers or a multimillion-dollar compute budget.

This "democratization of frontier AI" could have several profound impacts:

- Accelerated Research and Development: Smaller startups, academic research labs, and even individual developers could rapidly prototype and deploy highly specialized AI solutions, fostering a more diverse and innovative ecosystem. This could lead to breakthroughs in niche applications that large labs might overlook due to their focus on general-purpose models.

- Reduced Economic Barriers: By automating and optimizing the fine-tuning process, AutoScientist could significantly lower the cost of developing and maintaining high-performance AI models, making advanced AI more accessible to businesses of all sizes.

- Enhanced Industry Competition: A level playing field in AI development could stimulate greater competition, encouraging more rapid innovation and preventing the monopolization of advanced AI capabilities by a few dominant players.

- New Applications and Industries: With easier access to fine-tuned AI, new applications in fields like personalized medicine, advanced materials science, environmental monitoring, and hyper-customized educational tools could emerge more quickly.

The current AI investment climate reflects this optimism. Billions of dollars have poured into AI companies in the past few years, with a particular emphasis on infrastructure, foundational models, and tools that enhance developer productivity. Adaption, as a "neolab" backed by significant venture capital, fits squarely into this narrative, promising not just another model but a tool to accelerate the creation and deployment of all models. Its focus on the "whole stack," from data to model optimization, resonates with the growing understanding that AI success is as much about the ecosystem and tooling as it is about raw model size.

The Road Ahead for Self-Improving Systems

While AutoScientist represents a significant step, the journey towards truly autonomous, self-improving AI is ongoing. Future iterations of such tools will likely incorporate even more sophisticated meta-learning capabilities, enabling them to generalize optimization strategies across vastly different tasks and domains. The ethical considerations surrounding increasingly autonomous AI development also remain paramount. Questions about accountability, bias propagation in self-optimizing loops, and the control mechanisms for such systems will need careful consideration as these technologies mature.

For now, Adaption’s AutoScientist offers a compelling glimpse into a future where the creation of specialized, high-performance AI is no longer the exclusive domain of a select few. By intelligently automating the intricate dance between data and model, Adaption is not just building a product; it is contributing a crucial piece to the puzzle of how humanity will interact with and guide the next generation of intelligent machines. The coming months will reveal how widely AutoScientist is adopted and how profoundly it reshapes the landscape of AI development, potentially ushering in an era of unprecedented innovation across countless fields.