The technological landscape shifted this week as Amazon Web Services (AWS) officially announced that it is integrating OpenAI’s models into its Bedrock service, including OpenAI’s latest foundation models, the code-writing service Codex, and a new product for creating OpenAI-powered AI agents. The announcement follows closely on the heels of a revised agreement between OpenAI and its primary investor and cloud partner, Microsoft, which rescinded Microsoft’s previously held exclusive rights to certain OpenAI products. The change immediately intensifies the already fierce competition in the artificial intelligence sector and the underlying cloud infrastructure market.

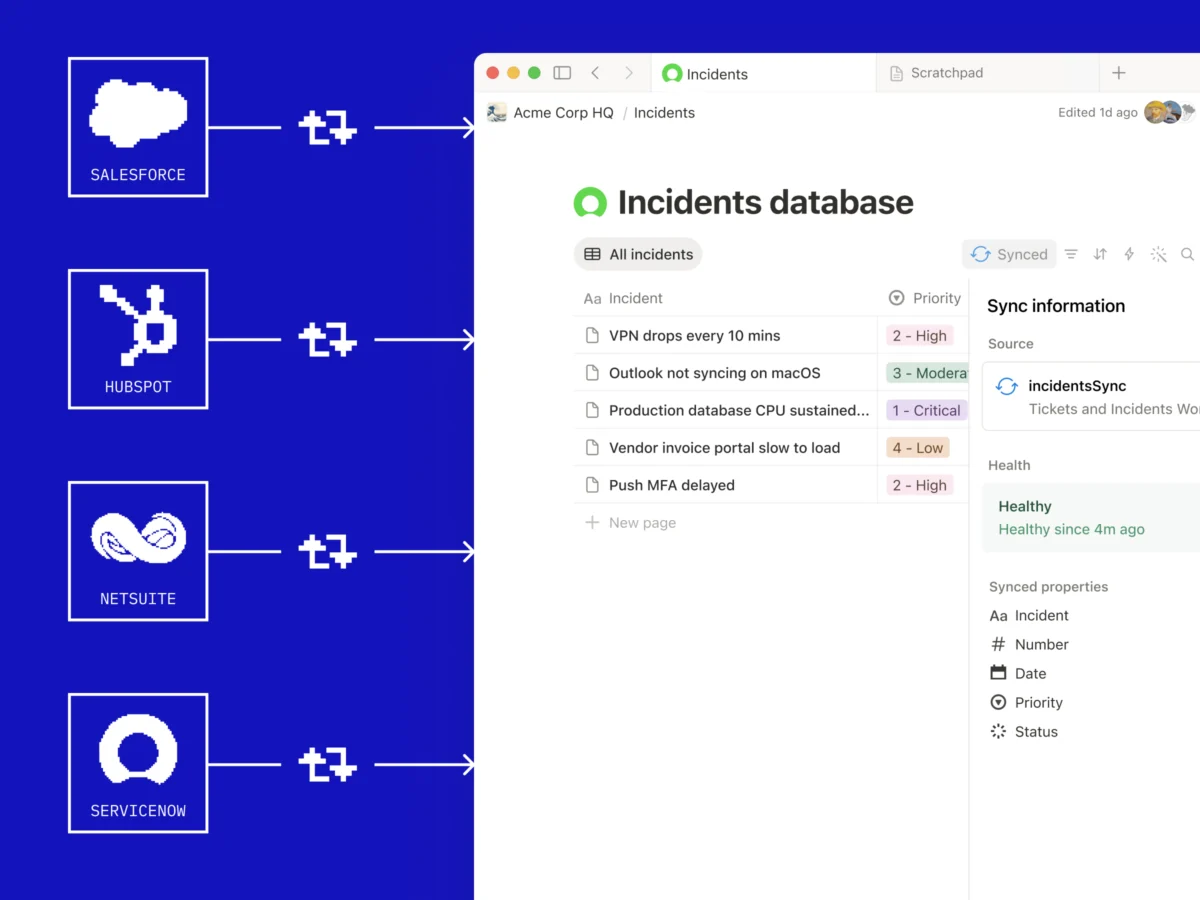

The announcement from Amazon, made on Tuesday, April 29, 2026, underscored a strategic triumph for the cloud computing giant, positioning AWS Bedrock as a central hub for developers seeking access to a diverse array of leading AI models. Bedrock, Amazon’s fully managed service designed to simplify the development of generative AI applications, now provides enterprises with direct access to OpenAI’s sophisticated reasoning models. This includes a newly introduced service, "Bedrock Managed Agents," specifically tailored to leverage OpenAI’s capabilities for complex task automation, offering advanced features like agent steering and enhanced security protocols. Amazon’s official blog post heralded this move as "the beginning of a deeper collaboration between AWS and OpenAI," a declaration that resonates with significant implications across the industry.

A Decisive End to Exclusivity: Reshaping the AI Partnership Paradigm

The catalyst for Amazon’s swift action was a critical update to the OpenAI-Microsoft partnership agreement, disclosed on Monday, April 28, 2026. This revision removed the exclusivity clauses that had previously granted Microsoft unique access and deployment rights for many of OpenAI’s advanced models. For years, this exclusivity had been a cornerstone of Microsoft’s strategy to dominate the enterprise AI market through its Azure cloud platform, making it a formidable competitive barrier.

As soon as the revised agreement became public, Amazon CEO Andy Jassy took to social media, remarking in a tweet that it was a "very interesting announcement." This understated comment belied the strategic importance of the development for Amazon, which had been eager to integrate OpenAI’s models into its own cloud ecosystem. The previous contractual arrangement had presented a significant hurdle for AWS, particularly after OpenAI finalized an "up-to-$50-billion deal" with Amazon earlier in the year, a deal widely understood to involve substantial compute credits and infrastructure support from AWS. That deal signaled OpenAI’s intention to diversify its infrastructure providers and reduce its reliance on a single partner, a move seemingly at odds with Microsoft’s exclusive rights. The revised agreement cleared the path for this diversification to fully materialize, allowing OpenAI to leverage AWS’s global infrastructure and extensive customer base.

The Genesis of a Strategic Partnership: Microsoft and OpenAI’s Early Alliance

To fully appreciate the significance of this week’s developments, it is crucial to revisit the origins and evolution of the Microsoft-OpenAI partnership. Microsoft’s involvement with OpenAI dates back several years, beginning with an initial investment of $1 billion in 2019 and followed by further substantial funding rounds that reportedly reached into the tens of billions of dollars. The alliance was forged during a period when OpenAI was transitioning from a non-profit research organization to a "capped-profit" entity, seeking significant capital to fund its ambitious large language model (LLM) research and development.

For Microsoft, the partnership was a masterstroke. It provided the Redmond-based software giant with early, preferential access to some of the most advanced AI models in the world, including the GPT series, which rapidly became synonymous with cutting-edge generative AI. This exclusive access allowed Microsoft to integrate OpenAI’s capabilities deeply into its Azure cloud services, Office suite, Bing search engine, and a myriad of other products, offering a distinct competitive advantage against rivals like Google and Amazon. The strategy was clear: leverage OpenAI’s technological prowess to differentiate Azure as the premier cloud for AI workloads and infuse AI into its vast enterprise software ecosystem. This deep integration, coupled with massive compute resources provided by Azure, enabled OpenAI to scale its training operations and push the boundaries of AI research.

The exclusivity clauses within their agreement were a critical component, designed to ensure Microsoft reaped maximum strategic benefits from its substantial investment. They effectively locked out Microsoft’s primary cloud competitors, particularly AWS and Google Cloud, from directly offering OpenAI’s flagship models to their customers. This arrangement made Azure the de facto home for many enterprises looking to build applications powered by OpenAI’s technology.

Amazon’s Calculated Ascent in the AI Race

While Microsoft was building its exclusive bridge to OpenAI, Amazon was pursuing a somewhat different, albeit equally ambitious, AI strategy. AWS, the undisputed leader in cloud computing infrastructure, had long offered its own suite of AI/ML services, most notably Amazon SageMaker for machine learning development and deployment, and various specialized AI services for tasks like natural language processing, computer vision, and forecasting. However, the rise of powerful foundational models from OpenAI and others presented a new challenge and opportunity.

Amazon’s initial approach to foundation models focused on developing its own internal models, such as Titan, and providing a platform, Bedrock, that offered choice by integrating various third-party models alongside its own. This "model of models" strategy was designed to cater to diverse customer needs without being beholden to a single provider. The challenge, however, was the immense market demand for OpenAI’s highly publicized, high-performing models. The up-to-$50-billion deal between OpenAI and Amazon earlier this year was a clear indicator of both OpenAI’s desire to expand its infrastructure footprint beyond Azure and Amazon’s determination to offer these in-demand models to its vast customer base. This deal, primarily structured around compute credits, effectively made AWS a significant infrastructure provider for OpenAI, creating a contractual tension that necessitated a re-evaluation of the Microsoft-OpenAI exclusivity terms.

With the removal of Microsoft’s exclusive rights, Amazon swiftly moved to capitalize. The integration of OpenAI models into Bedrock means that AWS customers can now access and fine-tune models like GPT-4, use Codex for sophisticated code generation, and deploy the new Bedrock Managed Agents directly within the AWS ecosystem. This not only enhances Bedrock’s appeal as a comprehensive AI development platform but also ensures that AWS remains competitive in attracting and retaining AI-centric workloads. For developers and enterprises, this translates into greater flexibility and choice, allowing them to leverage OpenAI’s capabilities on the cloud platform they already utilize or prefer.

Intensifying the Cloud Wars: AI as the New Battleground

The ramifications of this shift are profound, particularly for the fiercely competitive cloud computing market. For years, AWS and Microsoft Azure have been locked in a battle for market share, with Google Cloud Platform also vying for a significant slice. AI has emerged as the latest, and perhaps most critical, battleground. Microsoft’s exclusive access to OpenAI’s models had given Azure a distinct advantage in attracting generative AI startups and enterprise customers. Now, with OpenAI models available on AWS, the playing field has leveled considerably.

This development will likely lead to intensified competition for AI workloads. AWS can now aggressively market its Bedrock service with the full spectrum of OpenAI models, appealing to customers who prefer Amazon’s ecosystem for its breadth of services, global reach, or existing infrastructure investments. Microsoft, while still deeply integrated with OpenAI and retaining significant influence, will need to redouble its efforts to differentiate Azure AI through other means, such as specialized services, enterprise-grade security features, and its own proprietary models.

Industry analysts predict that this move could accelerate multi-cloud strategies among enterprises, as companies seek to diversify their AI model providers and cloud infrastructure to avoid vendor lock-in and optimize for performance and cost. The ability to access OpenAI models on both Azure and AWS provides enterprises with unprecedented flexibility in designing their AI architectures.

The "Deteriorating" Relationship and Strategic Diversification

The recent developments also shed light on what has been widely reported as a "deteriorating" relationship between Microsoft and OpenAI. While both companies have publicly maintained a strong partnership, industry whispers and strategic actions have suggested a growing strain. OpenAI, driven by its mission to develop advanced AI for the benefit of all humanity, appears keen to avoid over-reliance on a single commercial partner, even one as significant as Microsoft. Its expansion to AWS and reported discussions with Oracle for additional compute infrastructure underscore a deliberate strategy of diversification.

Concurrently, Microsoft has not put all its AI eggs in the OpenAI basket. The Redmond giant has invested significantly in and partnered with Anthropic, a prominent competitor to OpenAI and the developer of the Claude series of foundation models. Microsoft’s reported efforts to develop its own agent offerings powered by Claude further illustrate its strategy of hedging its bets and building a broad AI portfolio. This two-pronged approach is meant to keep Microsoft a leader in AI regardless of how its partnership with OpenAI evolves. The competition among AI model providers is also heating up, with companies like Google (Gemini), Meta (Llama), and a host of startups pushing the boundaries. This competitive environment encourages diversification for both model developers and cloud providers.

Broader Impact and Future Implications

The integration of OpenAI models into AWS Bedrock represents more than just a commercial deal; it is a significant inflection point for the broader AI ecosystem.

- Increased Developer Choice and Innovation: Developers now have more options for deploying OpenAI models, potentially fostering greater innovation as they can leverage the strengths of different cloud platforms. This could lead to a more vibrant and competitive landscape for AI application development.

- Democratization of Advanced AI: By making these powerful models accessible on multiple leading cloud platforms, the barriers to entry for companies wanting to build with advanced generative AI are lowered. This could accelerate the adoption of AI across various industries.

- Shift in Cloud Provider Strategies: Cloud providers will increasingly focus on value-added services around foundational models, such as fine-tuning capabilities, data management, security, and developer tools, rather than just exclusive model access.

- Evolving Partnership Models: The episode highlights the dynamic nature of strategic partnerships in the fast-moving AI sector. Exclusive deals, while initially beneficial, may become restrictive as the technology matures and market demands evolve. Future partnerships might lean more towards collaboration and interoperability than strict exclusivity.

- Focus on AI Agents: The introduction of "Bedrock Managed Agents" specifically leveraging OpenAI’s reasoning models signals a growing emphasis on autonomous AI agents capable of performing complex, multi-step tasks. This frontier of AI development is expected to revolutionize business processes and customer interactions.

As the "beginning of a deeper collaboration" unfolds, the industry will closely watch how AWS and OpenAI leverage this partnership to push the boundaries of AI innovation and how Microsoft responds to this new competitive landscape. The race for AI dominance is far from over, and this week’s developments merely underscore that the most compelling chapters are still being written. The future of AI will be characterized by both intense competition and strategic collaboration, ultimately benefiting developers and enterprises seeking to harness the transformative power of artificial intelligence.