The burgeoning landscape of artificial intelligence development has been rocked by a significant security incident, as a highly insidious malware was discovered embedded within an open-source project from LiteLLM, a prominent Y Combinator graduate. This event, unfolding like a plotline from a tech-satire series, has exposed critical vulnerabilities within the open-source supply chain and ignited intense scrutiny over the efficacy of third-party security compliance certifications, particularly those provided by the AI-powered startup Delve.

The Discovery and Nature of the Attack

The malware’s presence was first brought to light by Callum McMahon, a research scientist at FutureSearch, a company specializing in AI agents for web research. McMahon’s investigation was prompted by an anomalous system shutdown on his machine shortly after he downloaded LiteLLM. This seemingly minor technical glitch proved to be a critical early warning sign, leading him to meticulously trace the root cause and subsequently document and disclose the malicious code.

The malware entered through a dependency, a common weak point where open-source software relies on other external open-source packages to function. Once activated, it showed a sophisticated credential-harvesting capability: it stole login credentials from every system it touched and used them to gain unauthorized access to an ever-expanding network of open-source packages and accounts. This lateral movement strategy let the malware propagate, harvesting more credentials at each step in a cascading effect that threatened the integrity and security of countless developer environments and projects.

Ironically, the malware’s own shoddy design played a role in its swift detection. According to McMahon, and echoed by famed AI researcher Andrej Karpathy, the bug that crashed McMahon’s machine was a symptom of poorly engineered code, leading both to conclude that the malware was likely "vibe coded," a colloquial term suggesting development done hastily and without rigorous quality control or security considerations. This sloppiness, while detrimental to the attackers’ operational stealth, was a stroke of luck for the wider developer community, as it led to an unusually rapid discovery.

LiteLLM: A Rising Star Under Siege

LiteLLM, the project at the center of this incident, has rapidly ascended to prominence within the AI development community. Its core offering gives developers a streamlined, unified API to access hundreds of diverse AI models, alongside essential features like spend management. This functionality has made it an indispensable tool for many, leading to astonishing adoption rates. According to Snyk, a cybersecurity firm actively monitoring the incident, LiteLLM has been downloaded as many as 3.4 million times per day. The project’s popularity is further underscored by its presence on GitHub, where it has over 40,000 stars and thousands of forks (copies that developers have adapted for their own customized applications). This widespread integration meant that the potential impact of the malware was substantial, threatening a vast ecosystem of dependent projects and developers.

The company, a graduate of the highly influential Y Combinator accelerator program, represents the vanguard of innovation in the rapidly evolving AI sector. Its rapid growth and deep integration into the developer workflow underscore both the immense potential and the inherent risks associated with modern software development, particularly in the open-source domain where shared components are both a strength and a potential Achilles’ heel.

The Open-Source Supply Chain Vulnerability

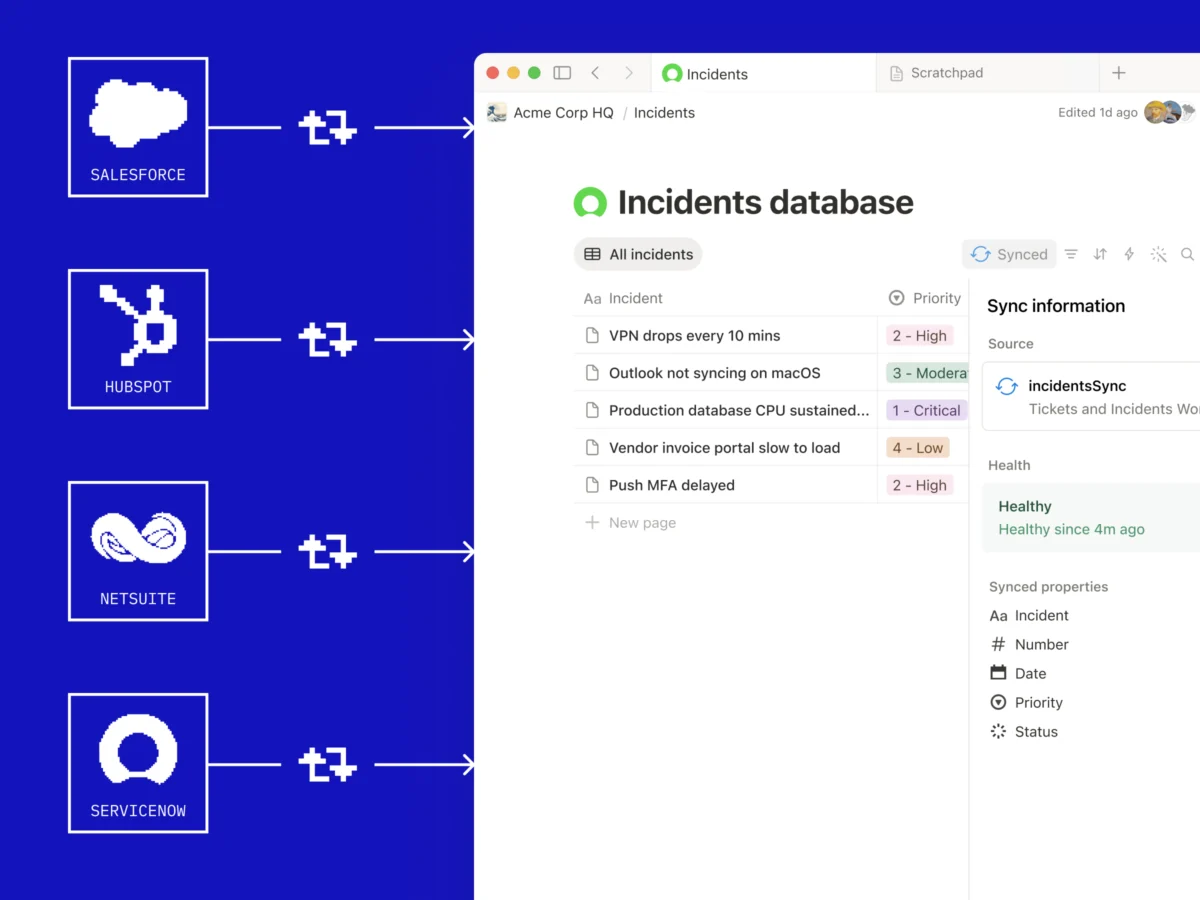

This incident serves as a stark reminder of the inherent vulnerabilities within the modern open-source software supply chain. Contemporary software development heavily relies on modular components, often pulling in dozens, if not hundreds, of third-party libraries and packages. While this modularity accelerates development and fosters innovation, it also introduces a complex web of dependencies. A malicious actor needs only to compromise a single, widely used dependency to inject malware into a vast array of downstream projects, as was the case with LiteLLM.
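To make the "single compromised dependency" point concrete, here is a minimal sketch that walks a dependency graph to find everything downstream of one package. The graph and every package name in it are invented for illustration; they do not describe LiteLLM's actual dependency tree.

```python
from collections import deque

# Hypothetical dependency graph: each key depends on the packages in its list.
# All package names are invented for illustration only.
DEPENDS_ON = {
    "app-a": ["gateway-lib"],
    "app-b": ["gateway-lib", "http-client"],
    "gateway-lib": ["token-utils"],
    "http-client": [],
    "token-utils": [],
}


def affected_by(compromised, depends_on):
    """Return every package that directly or transitively depends on `compromised`."""
    # Invert the graph so we can walk from a dependency to its dependents.
    dependents = {name: set() for name in depends_on}
    for package, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(package)

    # Breadth-first walk over the inverted graph.
    seen = set()
    queue = deque([compromised])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


if __name__ == "__main__":
    print(sorted(affected_by("token-utils", DEPENDS_ON)))
```

In this toy graph, compromising the lowest-level package reaches both applications even though neither lists it as a direct dependency, which is the property that makes widely used packages attractive targets.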

The "dependency confusion" attack vector, where an attacker registers a private package name on a public registry to trick build tools into downloading their malicious version, is a well-documented threat. While the specifics of LiteLLM’s compromise are still under forensic investigation, the general mechanism of malware slipping in through a dependency highlights a systemic challenge. Developers often trust that widely used or officially sanctioned open-source components are secure, but the sheer volume and dynamic nature of these ecosystems make comprehensive vetting incredibly challenging. This incident reinforces the need for robust security practices throughout the entire software development lifecycle, including rigorous dependency scanning, integrity checks, and a "shift left" approach to security, integrating it from the earliest stages of development.
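One of the integrity checks mentioned above can be sketched in a few lines: compare the SHA-256 digest of a downloaded artifact against a hash that was pinned when the release was reviewed. The function names and sample bytes below are assumptions made for illustration, not part of any tool named in this article. Package managers offer built-in equivalents (pip, for example, has a hash-checking mode).

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_pinned_hash(artifact: bytes, pinned_hex: str) -> bool:
    """Check a downloaded artifact against a previously recorded digest."""
    actual = sha256_hex(artifact)
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(actual, pinned_hex.lower())


if __name__ == "__main__":
    # Hypothetical artifact contents; a real check would read the downloaded file.
    reviewed = b"contents of the release that was reviewed"
    pinned = sha256_hex(reviewed)

    print(matches_pinned_hash(reviewed, pinned))
    print(matches_pinned_hash(b"contents altered after review", pinned))
```

A check like this only detects an artifact that differs from the one that was pinned. It does not help if a malicious release is itself the version that gets reviewed and pinned, so it complements dependency scanning rather than replacing it.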

Rapid Response and Remediation Efforts

Upon the discovery and disclosure of the malware, the LiteLLM development team initiated an immediate and intensive response. Working non-stop, they focused on rectifying the situation, pushing out critical updates, and providing guidance to their user base. The good news for the affected community is that the malware was detected and addressed with remarkable speed, likely within hours of its initial propagation. This rapid response is crucial in mitigating potential damage from such attacks, limiting the window of opportunity for attackers to exploit compromised credentials and data.

LiteLLM CEO Krrish Dholakia acknowledged the severity of the situation and the company’s commitment to transparency and remediation. In a statement to TechCrunch, he emphasized, "Our current priority is the active investigation alongside Mandiant. We are committed to sharing the technical lessons learned with the developer community once our forensic review is complete." This collaboration with a leading cybersecurity firm like Mandiant indicates a serious commitment to understanding the full scope of the breach and implementing comprehensive preventative measures. The promise to share lessons learned is vital for the broader open-source community, allowing other projects to benefit from LiteLLM’s experience and fortify their own defenses.

The Compliance Conundrum: Delve and Security Certifications

Adding another layer of complexity and controversy to the incident is the revelation surrounding LiteLLM’s security compliance certifications. As of March 25th, LiteLLM’s website prominently displayed that it had achieved two major security compliance certifications: SOC 2 and ISO 27001. These certifications are widely recognized benchmarks, indicating that a company has robust security policies and controls in place to protect customer data and maintain operational integrity.

However, the irony and public outcry stemmed from the fact that LiteLLM obtained these certifications through Delve, an AI-powered compliance startup that is itself embroiled in significant controversy. Delve, also a Y Combinator alumnus, has been publicly accused of misleading its customers regarding their true compliance conformity. Allegations include generating fake data to meet audit requirements and utilizing auditors who "rubber stamp" reports without thorough scrutiny. While Delve has denied these allegations, the proximity of these two narratives has fueled considerable discussion and skepticism within the tech community.

It’s crucial to understand the nuanced role of such certifications. SOC 2 and ISO 27001 are designed to affirm that an organization has established and adheres to sound security policies and processes, not that it is impervious to every possible cyberattack. Specifically, SOC 2 Type 2 reports evaluate the effectiveness of controls over a period, and often include provisions related to vendor management and software dependencies. Therefore, while a certification does not guarantee immunity from malware, it implies that processes should be in place to detect, prevent, and respond to such incidents, particularly those stemming from dependencies.

The situation has led to pointed commentary from industry experts. Gergely Orosz, a well-known engineer, articulated the sentiment circulating online: "Oh damn, I thought this WAS a joke… but no, LiteLLM really was ‘Secured by Delve.’" This reaction highlights the erosion of trust when compliance mechanisms, intended to instill confidence, become entangled in allegations of dubious practices. The incident raises profound questions about the integrity of third-party compliance services and the due diligence required when selecting such partners.

Broader Implications for AI and Tech Security

This incident carries significant implications for the rapidly expanding AI industry and the broader tech landscape. First, it underscores the critical need for enhanced security measures in AI development. As AI models become more integrated into critical infrastructure and business operations, the attack surface expands, making robust security not just an option but a paramount necessity. The reliance on open-source components, while fostering innovation, introduces inherent risks that require proactive management and continuous vigilance.

Second, the controversy surrounding Delve casts a shadow over the booming market for AI-driven compliance solutions. While AI promises to streamline complex processes like security audits, this incident suggests that the human element of ethical oversight and rigorous validation remains indispensable. The allure of automated compliance must be balanced with genuine, verifiable security practices. Companies seeking certifications must perform their own due diligence on the auditors and compliance platforms they choose, rather than simply relying on the certificate itself.

Third, the event may prompt a reevaluation of how fast-growing startups, especially those accelerated by programs like Y Combinator, approach security and compliance. The rapid pace of innovation often prioritizes speed to market, but this incident serves as a stark reminder that security cannot be an afterthought. Integrating security by design from the outset, rather than bolting it on later, is essential for building resilient and trustworthy platforms.

Expert Reactions and Community Concerns

Beyond the immediate technical response, the incident has sparked widespread discussion across developer forums, social media platforms like X, and cybersecurity communities. The "vibe coding" aspect, while humorous in its description, points to a serious underlying issue: the pressure to ship quickly in the startup world can lead to shortcuts in security and quality assurance. The sloppiness of the malicious code did not cause the compromise, but it suggests that even attackers may be operating under similar pressures or with limited expertise.

The involvement of a Y Combinator-backed project and a Y Combinator-backed compliance provider has also drawn attention to the ecosystem’s internal dynamics. While Y Combinator provides invaluable support and acceleration for startups, the incident highlights that even within such a prestigious network, security vulnerabilities and ethical concerns can emerge. The tech community is keenly watching how both LiteLLM and Delve navigate these challenges, as their responses will likely set precedents for future incidents.

Looking Ahead: Lessons Learned and Future Challenges

The LiteLLM malware incident, coupled with the Delve compliance controversy, presents a multi-faceted learning opportunity for the tech industry. For developers, it reinforces the critical importance of scrutinizing dependencies, implementing supply chain security best practices, and staying abreast of emerging threats. Tools for dependency scanning, software bill of materials (SBOM) generation, and automated vulnerability detection will likely see increased adoption.
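As a rough illustration of what an SBOM-style inventory involves, the sketch below lists the packages installed in the current Python environment using the standard library's `importlib.metadata`. The output layout is a simplified assumption for illustration and is not a standard SBOM format such as CycloneDX or SPDX; dedicated tooling would be needed to produce those.

```python
import json
from importlib import metadata


def installed_components():
    """Collect the name and version of each installed distribution."""
    components = []
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions whose metadata is missing or broken
            components.append({"name": name, "version": dist.version})
    return sorted(components, key=lambda c: c["name"].lower())


def to_inventory_json(components):
    """Serialize the inventory in a simplified, non-standard JSON layout."""
    return json.dumps({"components": components}, indent=2)


if __name__ == "__main__":
    print(to_inventory_json(installed_components()))
```

Even an inventory this simple answers the first question that follows a supply chain incident: whether an affected package and version is present in a given environment.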

For companies, the event underscores the need for a holistic security strategy that goes beyond mere compliance checkboxes. Real security requires continuous monitoring, incident response planning, and a culture that prioritizes security at every level. Furthermore, the selection of third-party vendors, especially those dealing with critical functions like security compliance, demands thorough vetting and ongoing oversight.

As the AI revolution accelerates, the complexity of software systems will only grow, making robust security practices more challenging but also more critical than ever. The lessons from LiteLLM’s experience will undoubtedly contribute to a more secure future for AI development, even as the industry grapples with the inherent tension between rapid innovation and unwavering security. The full forensic review by LiteLLM and Mandiant is eagerly awaited, promising further insights into this intricate episode and actionable intelligence for the global developer community.