AI coding company Cursor found itself at the center of a swirling discussion this week following the launch of its new model, Composer 2, which it boldly promoted as offering "frontier-level coding intelligence." However, the ambitious announcement was swiftly met with a revelation from an astute X user, Fynn, who claimed Composer 2 was essentially a customized version of Kimi 2.5, an open-source model recently released by Chinese AI firm Moonshot AI. This discovery ignited a debate about transparency, the nature of innovation in the rapidly evolving AI landscape, and the underlying geopolitical tensions shaping the industry.

The Unveiling of Composer 2 and the Subsequent Revelation

At launch, Cursor, a well-funded U.S. startup specializing in AI-powered coding assistants, touted Composer 2 as a significant leap forward in AI-driven software development. The company’s blog post highlighted the model’s advanced capabilities, positioning it as a powerful tool designed to enhance developer productivity and code quality. The language suggested a proprietary breakthrough, the product of extensive in-house research and development that had culminated in this "frontier-level" offering.

Yet the narrative began to unravel shortly after the launch. An independent developer and X user, posting under the handle Fynn, conducted an analysis of Composer 2’s underlying code. Fynn’s investigation quickly pointed to an unexpected origin: identifiers in that code seemed to reference "Kimi," specifically Kimi 2.5. "Just Kimi 2.5 with additional reinforcement learning," Fynn asserted in a post that quickly gained traction, adding a pointed jab, "[A]t least rename the model ID." The evidence, primarily embedded code snippets, suggested that Cursor’s "frontier-level" model was built directly upon an existing open-source foundation, a fact notably absent from Cursor’s initial promotional materials. The discovery sent ripples through the AI community, prompting questions about the extent of Cursor’s originality and its communication strategy.

Cursor’s Response and Clarification: Acknowledging the Open-Source Base

The swift and public nature of Fynn’s revelation compelled Cursor to respond. Lee Robinson, Cursor’s Vice President of Developer Education, took to X to address the claims, confirming the model’s open-source lineage. "Yep, Composer 2 started from an open-source base!" Robinson acknowledged, validating Fynn’s findings. However, he quickly moved to clarify the extent of Cursor’s contribution, stating, "Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training." This statement aimed to underscore the significant effort Cursor had invested in refining and enhancing the base model.
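Taken at face value, Robinson's rough figure implies that Cursor's own training consumed about three times as much compute as the base model it started from. The short sketch below works through that arithmetic; the function name `compute_split` is hypothetical, and the one-quarter share is only the approximate value ("~1/4") quoted above, not a measured number.

```python
from fractions import Fraction


def compute_split(base_share: Fraction) -> dict:
    """Split total training compute, given the base model's share of it.

    base_share is the fraction of total compute attributed to the base
    model (an assumed input; the article quotes roughly 1/4).
    """
    if not 0 < base_share < 1:
        raise ValueError("base_share must be strictly between 0 and 1")
    added_share = 1 - base_share
    return {
        "base_share": base_share,
        "added_share": added_share,
        # How many units of additional training per unit of base compute.
        "added_to_base_ratio": added_share / base_share,
    }


split = compute_split(Fraction(1, 4))
print(split["added_share"])          # 3/4
print(split["added_to_base_ratio"])  # 3
```

The ratio says nothing about how that compute was divided between continued pretraining and reinforcement learning, and nothing about the resulting quality; it only restates the quoted share in relative terms.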

Robinson further elaborated that Composer 2’s performance on various industry benchmarks was "very different" from Kimi’s, implying that Cursor’s extensive training and reinforcement learning had substantially transformed the original Kimi 2.5 model into something distinct. This explanation drew a distinction between adopting an open-source foundation and simply repackaging an existing model, emphasizing the value added by subsequent training and fine-tuning. The debate then shifted from a simple accusation of rebranding to a discussion about the computational resources and intellectual property invested in transforming a base model into a commercial product.

The Role of Moonshot AI and the Open-Source Ecosystem

The open-source base in question, Kimi 2.5, comes from Moonshot AI, a prominent Chinese artificial intelligence company. Moonshot AI has garnered significant attention and investment, notably from tech giants like Alibaba and venture capital firms such as HongShan (formerly Sequoia China). The release of Kimi 2.5 as an open-source model earlier this year positioned Moonshot AI as a key player in the global AI landscape, contributing to the growing repository of accessible AI models. Open-source models, by their nature, allow developers and companies worldwide to access, modify, and build upon existing AI frameworks, fostering collaboration and accelerating innovation across the industry.

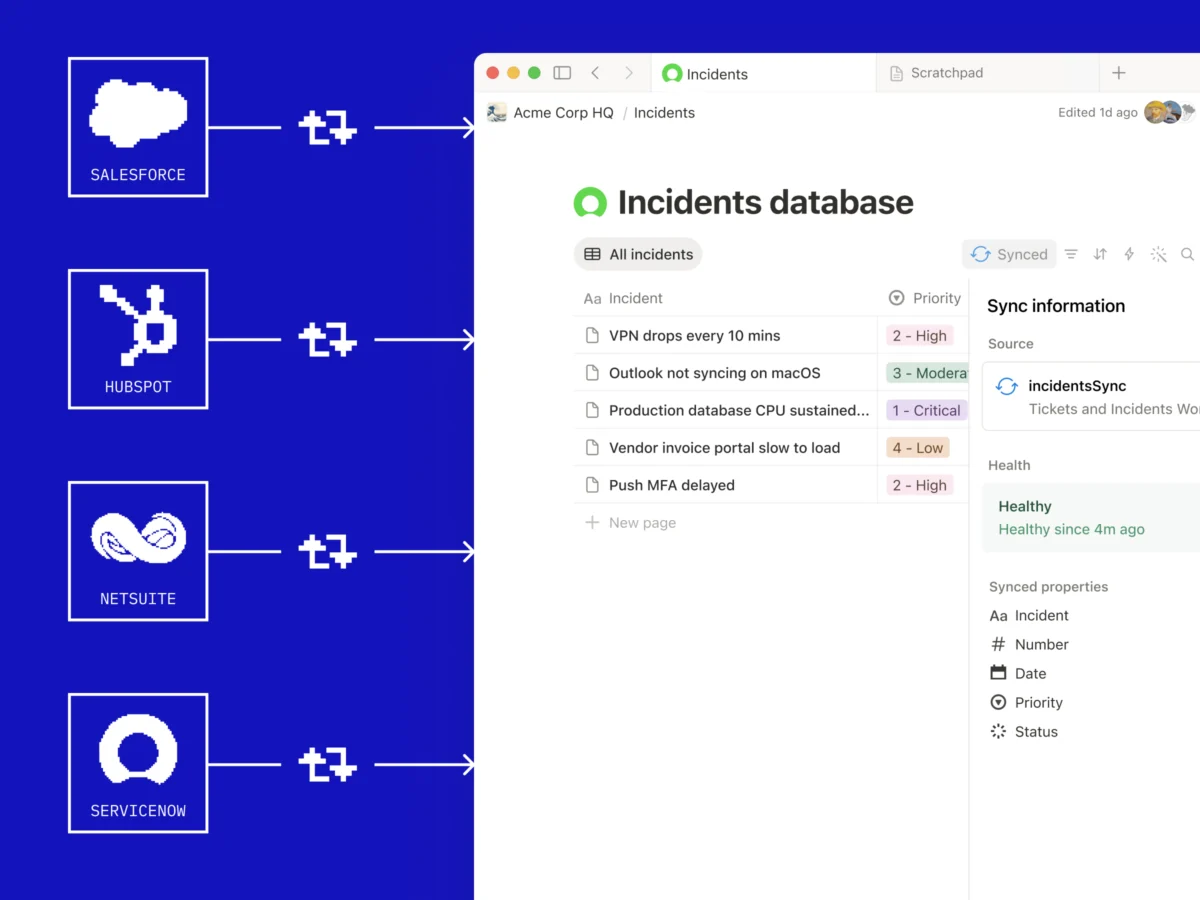

Crucially, Lee Robinson also insisted that Cursor’s use of Kimi 2.5 was entirely consistent with the terms of its license. This assertion was subsequently corroborated by the official Kimi account on X. In a post congratulating Cursor, the Kimi account stated that Cursor utilized Kimi "as part of an authorized commercial partnership with Fireworks AI." Fireworks AI, a platform known for facilitating the deployment and management of large language models, seemingly played an intermediary role, ensuring the licensing and usage were legitimate. "We are proud to see Kimi-k2.5 provide the foundation," the Kimi account declared, further adding, "Seeing our model integrated effectively through Cursor’s continued pretraining & high-compute RL training is the open model ecosystem we love to support." This statement from Moonshot AI not only validated Cursor’s claims of proper licensing but also highlighted the collaborative spirit often celebrated within the open-source community, where advancements are built upon shared foundations.

A High-Stakes Environment: Funding, Valuation, and the AI Race

The controversy takes on added significance when considering Cursor’s financial standing and market perception. The U.S. startup is not a small, nascent venture; it is a well-capitalized company that raised $2.3 billion in a funding round in late 2025, pushing its valuation to $29.3 billion. Furthermore, the company has reportedly surpassed $2 billion in annualized revenue, underscoring its rapid growth and perceived leadership in the AI coding assistant market. That a company with such financial backing built its "frontier-level" model on an open-source base, particularly one from a Chinese firm, naturally raised eyebrows and questions about the definition of proprietary innovation.

This incident underscores a broader trend in the AI industry: even well-funded startups are increasingly leveraging open-source foundations rather than building every component from scratch. The sheer computational cost and specialized expertise required to train large language models (LLMs) from the ground up are immense, often running into tens or hundreds of millions of dollars. For many companies, it makes strategic and economic sense to take an existing high-performing open-source model, such as Kimi 2.5, and then invest their resources in fine-tuning, specialized training (like reinforcement learning for coding tasks), and custom data sets to achieve a differentiated product. This approach allows companies to accelerate development, reduce upfront costs, and focus on niche applications, but it also necessitates clear communication about the origins of their technology to maintain transparency and trust.

The Geopolitical Undercurrents of AI Development

Perhaps the most sensitive aspect of this revelation lies in the geopolitical context. The so-called "AI arms race" is often framed as an existential competition between the United States and China, with both nations vying for technological supremacy. This rivalry encompasses everything from chip manufacturing and quantum computing to the development of advanced AI models. In this highly charged atmosphere, a U.S. startup leveraging technology from a prominent Chinese AI firm can be perceived through a complex lens.

Examples of this tension are plentiful. The U.S. government has implemented various export controls and sanctions aimed at limiting China’s access to advanced AI chips and technology, citing national security concerns. Conversely, Chinese companies are actively working to develop indigenous alternatives and push the boundaries of their own AI capabilities. The "apparent panic" in Silicon Valley following the release of competitive models by Chinese companies like DeepSeek early last year illustrates the competitive intensity. In such an environment, the initial omission of Moonshot AI and Kimi from Cursor’s announcement could be interpreted as an attempt to navigate these delicate geopolitical waters, avoiding potential scrutiny or negative perception associated with leveraging Chinese technology, despite its open-source nature and authorized usage. The desire to project an image of pure, homegrown innovation might have outweighed the impulse for full disclosure, at least initially.

Transparency and Trust: Acknowledging the "Miss"

Ultimately, Cursor co-founder Aman Sanger publicly addressed the oversight, acknowledging the company’s misstep. "It was a miss to not mention the Kimi base in our blog from the start," Sanger admitted in a post on X, promising, "We’ll fix that for the next model." This statement signaled a commitment to greater transparency moving forward, recognizing the importance of clear communication with their user base and the broader AI community.

The incident highlights a critical challenge for AI companies: balancing the desire to present a unique and advanced product with the ethical and practical imperative of transparency, especially when leveraging open-source components. In a field as fast-moving as AI, where models are often built upon layers of previous research and development, clear attribution is vital for fostering a healthy ecosystem of innovation. Users and the industry increasingly expect full disclosure, not only to give credit where credit is due but also to build trust and clarify the actual extent of a company’s proprietary contributions.

Implications for the Future of AI Development

The Cursor-Kimi episode offers several key takeaways for the future of AI development:

- The Power of Open Source: It reaffirms the critical role of open-source models in accelerating AI innovation globally. Companies, regardless of their funding, can leverage these foundational models to build specialized applications more efficiently.

- The Nuance of "Innovation": The debate underscores that innovation in AI is not solely about creating models from scratch but also about the ability to effectively fine-tune, adapt, and apply existing models to specific problems, often requiring significant computational and intellectual investment.

- Transparency as a Core Value: The initial lack of disclosure and subsequent public acknowledgment emphasize the growing importance of transparency in the AI industry. Companies are expected to be forthright about the origins of their technology, especially when promoting "frontier-level" capabilities.

- Geopolitical Realities: The incident serves as a reminder that technological development in AI is inextricably linked to geopolitical dynamics, with national competitiveness and strategic considerations often influencing corporate communication and collaboration.

- Interconnected Ecosystem: Despite geopolitical tensions, the AI ecosystem remains remarkably interconnected. Authorized partnerships and the open-source paradigm enable cross-border collaboration, albeit sometimes with complex communication challenges.

As the AI landscape continues to mature, companies will likely face increasing scrutiny regarding their development practices and disclosures. The Cursor Composer 2 saga serves as a timely reminder that while rapid innovation is celebrated, it must be balanced with clear communication and a commitment to transparency to maintain trust and foster a robust, collaborative, and ethical AI future.