The rapid integration of generative artificial intelligence into the fabric of daily life has sparked an unprecedented global dialogue regarding the long-term trajectory of human civilization. While high-profile industry leaders and academic researchers frequently debate the existential risks associated with advanced machine intelligence, a new study suggests that the general American public remains largely optimistic about the technology’s role in society. According to research published in the Journal of Technology in Behavioral Science, the majority of individuals do not subscribe to extreme negative narratives, such as the "P(Doom)" scenario, which posits a high probability of artificial intelligence causing a catastrophic collapse of civilization or human extinction. Instead, attitudes toward AI appear to be deeply influenced by individual psychological profiles, social health, and prior familiarity with technological tools.

The study, led by researchers Rose E. Guingrich and Michael S. A. Graziano, arrives at a critical juncture in the evolution of digital ethics and public policy. As AI systems become more autonomous and capable of performing complex cognitive tasks, the dichotomy between "AI optimism"—the belief that AI will solve global crises and enhance productivity—and "P(Doom)"—the fear of an unaligned superintelligence—has become a focal point for both Silicon Valley and Washington D.C. The findings indicate that while the "doomsday" narrative receives significant media attention, it has not yet taken deep root in the collective psyche of the average U.S. resident.

The Evolution of the AI Sentiment Landscape

To understand the current state of public opinion, it is necessary to examine the timeline of AI’s recent ascent. The public release of ChatGPT in late 2022 served as a catalyst, moving AI from the realm of science fiction into a tangible tool used by millions for work, education, and entertainment. By early 2023, the discourse had shifted toward the potential risks of these systems. In May 2023, just one month before the data for this study was collected, the Center for AI Safety released a brief statement signed by hundreds of AI scientists and notable figures, asserting that "mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war."

This atmosphere of heightened expert concern provided the backdrop for Guingrich and Graziano’s investigation. Their goal was to determine whether this elite-level anxiety was mirrored in the general population or if the public viewed the technology through a more pragmatic or hopeful lens. By surveying 402 U.S. residents in June 2023, the researchers captured a snapshot of public sentiment at the height of the first major wave of generative AI adoption.

Methodology and Experimental Framework

The research took a multi-dimensional approach to assessing how people interact with and perceive AI. Participants were recruited through Prolific, a platform known for providing high-quality samples for behavioral research. The sample spanned a broad range of U.S. adults: 49% identified as women, and most participants were between 25 and 44 years old.

A key element of the study was its experimental design. Participants were randomly assigned to one of two conditions. The first group was required to engage in a 10-minute interaction with a popular AI chatbot—either ChatGPT, Replika, or Anima—before completing the survey. This interaction was designed to test whether direct experience with an AI agent would soften or harden a participant’s existing biases. The second group proceeded directly to the survey without a preceding AI interaction, serving as a control group.

The survey itself was comprehensive, utilizing several validated psychological instruments:

- The Affinity for Technology Interaction (ATI) Scale: To measure how comfortable and interested participants are in using new technology.

- The Ten Item Personality Inventory (TIPI): A shortened version of the Big Five personality test measuring openness, conscientiousness, extraversion, agreeableness, and neuroticism.

- The UCLA Loneliness Scale: To assess the degree of social isolation.

- The Perceived Social Competence Scale and Rosenberg Self-Esteem Scale: To gauge overall "social health."

By correlating these psychological markers with "P(Doom)" sentiments and "AI Optimism" scores, the researchers were able to build a profile of the typical AI optimist versus the typical AI skeptic.

Psychological Predictors of AI Sentiment

The most striking findings of the study involve the correlation between personality traits and AI attitudes. The data revealed that individuals who scored higher in "agreeableness"—a trait characterized by trust, altruism, and prosocial behavior—tended to view AI more favorably. These individuals were more likely to believe that AI would bring about positive changes in healthcare, scientific discovery, and general human prosperity.

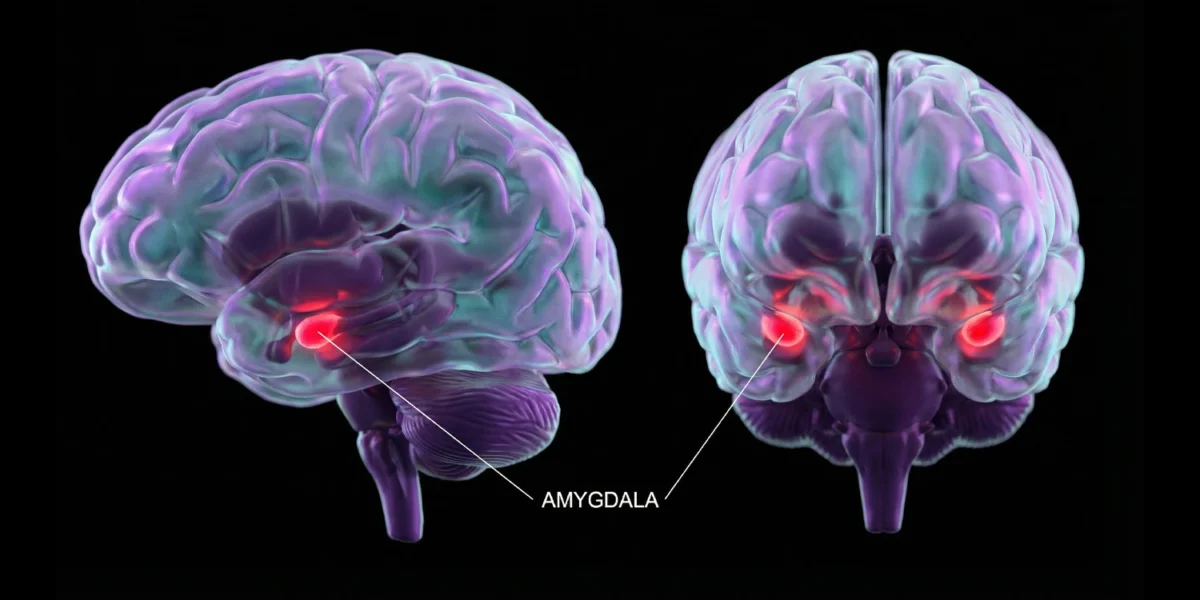

Conversely, "neuroticism"—the tendency to experience negative emotions such as anxiety, depression, and vulnerability—was a strong predictor of negative AI sentiment. Those with higher neuroticism scores were more likely to agree with statements regarding "P(Doom)" and expressed greater concern that AI might "take over the world" or cause irreversible harm. This suggests that the fear of AI may be part of a broader psychological predisposition toward risk aversion and anxiety regarding external threats.

Social health also played a significant role. The researchers found that individuals with higher social competence and self-esteem were more optimistic about the large-scale impact of AI. Interestingly, those who reported higher levels of loneliness were more likely to hold negative views of AI’s societal impact. This contradicts some earlier theories that lonely individuals might be more welcoming of AI as a form of surrogate companionship; in this study, lonely individuals remained skeptical of the technology’s broader benefits.

The Question of AI Companionship and Moral Rights

While general optimism prevailed regarding AI’s utility, the public remains conflicted about the role of AI in personal relationships. When asked whether AI agents such as robots or digital voice assistants would make "good social companions," participants were far more divided. Although the majority still leaned toward disagreement, scores were spread much more evenly than on the general optimism questions. This suggests that while people value AI as a productivity tool, they are hesitant to grant it a place in the human social sphere.

Furthermore, the study touched upon the burgeoning debate over "AI personhood" or moral rights. As AI systems become more sophisticated in their mimicry of human thought and emotion, some ethicists have argued for a framework of rights for digital entities. However, the study participants were sharply divided on this issue. There was no clear consensus on whether an AI should be protected from "harm" or if it deserves any form of moral consideration, reflecting a society that is still grappling with the definition of consciousness in the machine age.

Industry Context and Comparative Data

The findings of Guingrich and Graziano align with other recent public opinion research, though they offer a more nuanced psychological explanation. For instance, a 2023 Pew Research Center report found that while Americans are increasingly concerned about the role of AI in daily life, they also recognize its potential in specific sectors like medicine. The "P(Doom)" study adds to this picture by suggesting that the "concern" cited in many polls often reflects moderate caution rather than existential dread.

The tech industry’s reaction to such public sentiment has been varied. Companies like OpenAI and Anthropic have leaned into the safety narrative, perhaps to get ahead of regulation, while others, like Meta, have championed a more open and optimistic approach to development. The fact that most Americans do not currently fear an AI-led apocalypse provides these companies with significant "social license" to continue innovation, provided they can maintain public trust through transparency and tangible benefits.

Implications for Policy and the Future

The study’s conclusion—that extreme negative attitudes are not the norm—has profound implications for policymakers. If the public were largely terrified of AI, there would be a stronger mandate for restrictive, "precautionary principle" style regulations. However, since the public is generally optimistic, the focus of regulation may instead shift toward specific, manageable risks such as job displacement, misinformation, and data privacy, rather than the prevention of a hypothetical "superintelligent" takeover.

However, the researchers caution that these views are not static. Because AI is evolving so rapidly, public sentiment is likely to shift in response to major events. A high-profile AI failure or a significant economic disruption could quickly turn current optimism into widespread skepticism. Conversely, as AI becomes more integrated into healthcare and education and delivers clear, tangible benefits, the remaining "P(Doom)" concerns may fade further into the background.

In the final analysis, the research by Guingrich and Graziano suggests that our views on AI are as much a reflection of ourselves as they are of the technology. Our social health, our personality, and our comfort with change dictate how we perceive the "black box" of artificial intelligence. As we move deeper into the 21st century, the challenge for society will be to balance this natural optimism with a rigorous, evidence-based approach to safety, ensuring that the "P(Doom)" scenarios remain confined to the realm of theoretical probability rather than becoming a reality.