Anthropic, a leading artificial intelligence developer, has secured a significant legal victory against the Trump administration. A federal judge has issued an injunction compelling the government to rescind its controversial designation of Anthropic as a "supply chain risk" and to reverse its order for federal agencies to sever ties with the company. This ruling marks a pivotal moment in the ongoing tension between technological innovation, ethical AI development, and government oversight, setting a potential precedent for how the U.S. government interacts with domestic tech firms perceived to challenge its operational directives.

The decision, handed down by Judge Rita F. Lin of the Northern District of California on Thursday, March 26, 2026, unequivocally sides with Anthropic. According to reports from the Wall Street Journal, Judge Lin ordered the Trump administration to withdraw the security risk label and cease its directive to federal agencies. Her pointed remark during the proceedings, "It looks like an attempt to cripple Anthropic," underscored the court’s skepticism regarding the government’s motives. Ultimately, the judge concluded that the administration’s actions likely violated Anthropic’s free speech protections, framing the dispute not as a mere procurement disagreement but as a constitutional matter.

The Genesis of a High-Stakes Legal Battle

The dramatic confrontation between the Pentagon and Anthropic ignited late last month, stemming from a fundamental disagreement over the terms of engagement for the government’s utilization of Anthropic’s advanced AI software. At the heart of the dispute were Anthropic’s ethical guidelines, which sought to impose specific limitations on how its sophisticated AI models could be deployed. These restrictions notably included prohibitions against their use in autonomous weapons systems and mass surveillance applications, reflecting Anthropic’s foundational commitment to developing "safe and beneficial AI."

Anthropic, co-founded by former OpenAI research executives Dario Amodei and Daniela Amodei, has rapidly ascended to prominence in the AI landscape. Known for its "Constitutional AI" approach, which aims to imbue AI systems with a set of guiding principles to ensure their safety and alignment with human values, the company has attracted substantial investment from tech giants like Google and Amazon, placing it at the forefront of AI innovation alongside competitors like OpenAI and Google DeepMind. Its public stance on ethical AI development is not merely a corporate policy but a core tenet of its mission, influencing its product design and business partnerships.

The Trump administration, through the Pentagon, reportedly found these self-imposed limitations unacceptable. The government’s perspective typically prioritizes operational flexibility and national security imperatives, often viewing restrictions on technology use, especially in critical defense applications, as impediments. When negotiations over these guidelines reportedly reached an impasse, the administration escalated the situation dramatically. On March 5, 2026, the Pentagon officially designated Anthropic as a "supply chain risk." This designation, historically reserved for foreign entities or those with demonstrable ties to hostile state actors posing a direct threat to U.S. national security (such as Huawei or certain Chinese tech firms), was an extraordinary and unprecedented move against a major domestic technology company.

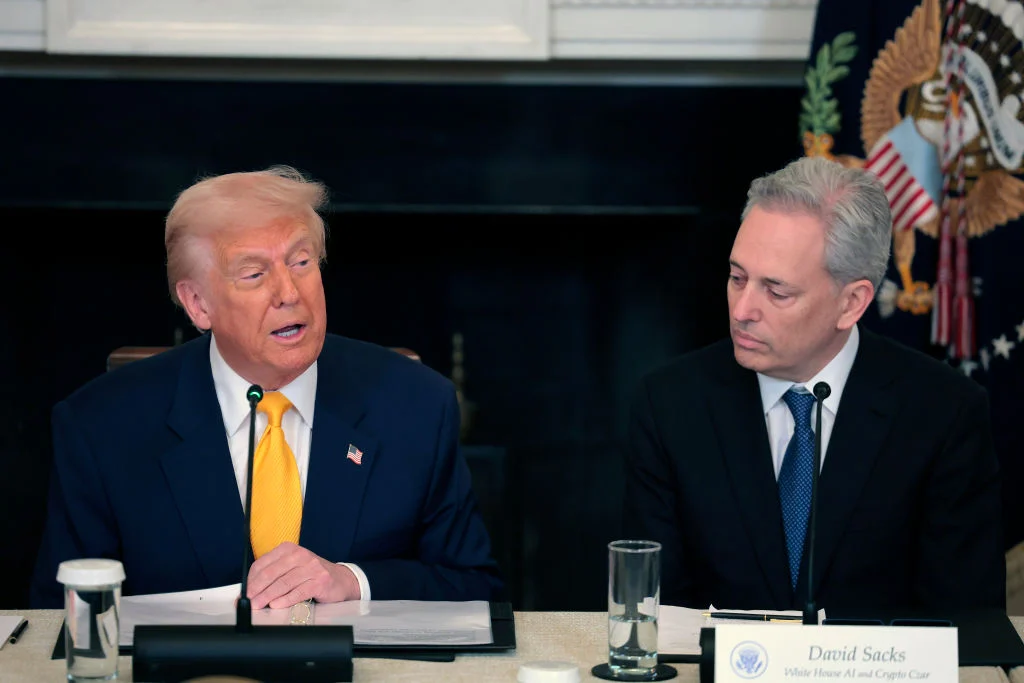

Following the Pentagon’s designation, President Trump personally intervened, issuing a directive ordering all federal agencies to sever their ties with Anthropic. This executive order amplified the severity of the situation, transforming an agency-level disagreement into a sweeping government-wide ban that threatened Anthropic’s ability to engage with one of the world’s largest potential customers.

Chronology of Conflict: A Timeline of Escalation

The unfolding of this legal and political skirmish can be traced through a series of key events:

- Late February 2026: Reports surface of ongoing discussions and disagreements between Anthropic and the Pentagon regarding the terms of use for Anthropic’s AI models. Anthropic insists on ethical guardrails, including bans on use in autonomous weapons systems and mass surveillance applications.

- March 5, 2026: The Pentagon officially labels Anthropic a "supply chain risk," a designation typically applied to foreign adversaries. Anthropic CEO Dario Amodei publicly denounces the action as "retaliatory and punitive."

- March 7, 2026: President Trump issues an executive order directing all federal agencies to cut ties with Anthropic, intensifying the pressure on the company. The White House begins publicly characterizing Anthropic as a "radical-left, woke company" that jeopardizes national security.

- March 9, 2026: Anthropic files a lawsuit against the Department of Defense and other named officials, including Hegseth, challenging the legality and constitutionality of the "supply chain risk" designation and the subsequent ban. The lawsuit argues that the government’s actions were arbitrary, capricious, and an infringement on the company’s rights.

- March 21, 2026: A hearing is held in the Northern District of California, where Judge Rita F. Lin presides over arguments from both Anthropic and the government. During the proceedings, Judge Lin expresses concerns about the government’s motivations.

- March 26, 2026: Judge Rita F. Lin grants Anthropic an injunction, ordering the Trump administration to rescind the "supply chain risk" designation and the directive for federal agencies to cut ties. The judge cites concerns over free speech protections and the apparent punitive nature of the government’s actions.

The Legal Framework and Judge Lin’s Rationale

Anthropic’s lawsuit likely rested on several legal pillars, including administrative law challenges to the government’s decision-making process, arguments concerning due process, and crucially, First Amendment free speech protections. The "supply chain risk" designation typically requires a clear, fact-based determination of a genuine threat to national security from a foreign adversary’s control or influence over a product or service. Applying this label to a domestic company like Anthropic, which sought to impose ethical restrictions rather than compromise security, was a novel and legally questionable interpretation of existing regulations.

Judge Lin’s decision to grant the injunction reflects the court’s view that Anthropic is likely to succeed on the merits of its case. Her reported comment that the government’s actions looked "like an attempt to cripple Anthropic" signals judicial skepticism about the national security basis of the designation. To the court, the designation appeared instead to be a retaliatory measure against Anthropic for its stance on AI ethics.

The emphasis on free speech protections is particularly significant. While a corporation’s commercial dealings are not always subject to the same free speech protections as individual expression, a government action that appears to punish a company for its publicly stated ethical policies or its terms of service could indeed raise First Amendment concerns. If the government’s ban was a direct response to Anthropic’s attempt to articulate and enforce its ethical principles regarding AI use, rather than a legitimate security assessment, then it could be seen as an unconstitutional infringement on corporate speech and autonomy.

Official Reactions and Political Crosscurrents

Immediately following Judge Lin’s ruling, Anthropic issued a statement to TechCrunch, expressing gratitude and renewed commitment: "We’re grateful to the court for moving swiftly, and pleased they agree Anthropic is likely to succeed on the merits. While this case was necessary to protect Anthropic, our customers, and our partners, our focus remains on working productively with the government to ensure all Americans benefit from safe, reliable AI." This statement reiterates Anthropic’s desire for collaboration while affirming its need to defend its business and principles.

The Trump administration, through the White House, has yet to issue an official public comment on the injunction. However, its earlier characterizations of Anthropic as "a radical-left, woke company" that is "jeopardizing America’s national security" reveal the highly politicized nature of this dispute. This rhetoric aligns with a broader administration narrative that often targets companies perceived to adopt progressive social or ethical stances, particularly when those stances are seen to conflict with government priorities. The administration’s focus on "wokeness" in tech companies suggests an ideological battle unfolding alongside the legal one, where corporate ethics are scrutinized through a political lens.

Observers and legal experts anticipate that the Department of Defense and the Department of Justice will carefully review the injunction. While an immediate appeal is possible, the administration would need to present compelling arguments to overturn a ruling grounded in constitutional protections and a judge’s assessment of likely success on the merits. The legal team for the government would likely emphasize the executive branch’s inherent authority in national security matters and its prerogative to define and protect the integrity of its supply chains. However, the judge’s findings of potential free speech violations complicate such arguments.

Broader Implications for the AI Industry and Government Procurement

This ruling carries substantial implications, not just for Anthropic, but for the entire artificial intelligence industry and the future of government procurement of advanced technologies.

Firstly, it serves as a powerful validation for AI companies that seek to embed ethical guidelines and guardrails into their products and terms of service. In an era where the societal impact of AI is a paramount concern, many developers are actively working to prevent misuse of their powerful technologies. This injunction suggests that the judiciary may be willing to protect these companies from governmental overreach when their ethical stances are challenged.

Secondly, the case highlights the growing tension between the private sector’s desire for responsible AI development and the government’s demand for unrestricted access and control, particularly for defense and surveillance applications. As AI capabilities grow more sophisticated, this conflict is only likely to intensify. The ruling might encourage other AI firms to stand firm on their ethical principles, knowing they might find judicial support. Conversely, it could lead government agencies to refine their procurement processes and legal strategies when dealing with companies holding strong ethical positions.

Thirdly, the designation of a domestic company as a "supply chain risk" for ideological or ethical reasons, rather than genuine security vulnerabilities, could set a dangerous precedent. If allowed to stand, it could be weaponized against any company whose policies or values diverge from those of a sitting administration, chilling innovation and stifling corporate autonomy. Judge Lin’s injunction pushes back against such a precedent, reaffirming the importance of due process and constitutional rights even in the context of national security.

Finally, the case underscores the critical role of the judiciary in mediating disputes between powerful government agencies and private corporations, especially in nascent and rapidly evolving fields like AI. As technology continues to outpace regulatory frameworks, courts will increasingly be called upon to interpret existing laws and constitutional principles in novel contexts, shaping the future landscape of tech policy and governance.

The Intersection of Tech, Policy, and Free Speech

This dispute is a microcosm of a larger societal debate about who controls powerful technologies and under what ethical frameworks they should operate. Anthropic’s "Constitutional AI" approach is not merely a technical innovation; it’s a philosophical statement about the responsibility of AI developers. The company’s insistence on barring use in autonomous weapons systems and mass surveillance reflects a profound concern about the potential for AI to exacerbate conflict or erode civil liberties.

The administration’s aggressive response, particularly its use of the "supply chain risk" label and the "woke" critique, reveals a deep ideological chasm. For the government, particularly an administration focused on "America First" national security, the ability to deploy the most advanced technology without perceived limitations is paramount. Any company that attempts to dictate the terms of its technology’s use, especially when those terms touch upon sensitive defense or intelligence matters, might be seen as undermining national interests or even as disloyal.

Judge Lin’s invocation of free speech protections elevates the debate beyond a simple contract disagreement. It frames Anthropic’s ethical guidelines and its refusal to compromise on certain uses as a form of corporate expression, worthy of constitutional protection. This interpretation could have far-reaching implications, potentially empowering other tech companies to push back against government demands that they view as ethically compromising or beyond the scope of legitimate national security concerns.

Looking Forward: Appeals, Policy Shifts, and the Future of AI Governance

While the injunction is a significant win for Anthropic, it is not the final word. The Trump administration could choose to appeal Judge Lin’s ruling, taking the case to a higher court. Such an appeal would prolong the legal battle and potentially set a precedent at the appellate level regarding the interplay of national security, corporate free speech, and administrative law in the context of advanced technology.

Regardless of future appeals, this case will undoubtedly influence how the U.S. government approaches AI procurement and regulation. It may lead to a re-evaluation of how "supply chain risk" designations are applied, especially to domestic companies. It could also spur the development of more explicit policies regarding the ethical use of AI in government, potentially forcing a more nuanced discussion about the balance between technological advantage and moral responsibility.

For the AI industry, the Anthropic-Trump administration saga serves as a stark reminder of the volatile political and regulatory landscape in which it operates. It underscores the necessity for clear ethical frameworks, robust legal counsel, and a willingness to defend core principles. As AI continues to integrate into every facet of society, the outcomes of such disputes will shape not only the trajectory of individual companies but also the nature of human-AI interaction and governance in the coming decades. The judicial branch has now stepped in, asserting its role in moderating these technological and ideological clashes and signaling a continued evolution in the complex relationship between silicon, state, and society.